Your AI Assistant Is Biased: Why & How To Write Prompts Mindfully

Published March 16, 2026

This week, we welcome back Laura Iancu who invites us as SEOs to create a more inclusive version of the internet through more mindful prompting of AI.

Let’s paint a picture together before we step into the monster’s belly.

For context: I’m a white Eastern European woman, born in 1989, almost in sync with the fall of the Iron Curtain. Depending on who you ask, I’m either “privileged” or someone to be looked at with that soft Western pity - the kind that says aww without meaning harm, but still quietly places you somewhere lower on the map.

I’ve lived long enough between cultures, East and West, Europe and Asia, offline and online, to know what it feels like to enter rooms where you’re tolerated, not welcomed. Physical rooms. Digital ones. Slack groups. Search results.

I’ve made some peace with the idea that I may not live long enough to see the world fully recalibrate. Unless, of course, I am secretly a vampire, in which case, give it 200 years. And here's a scary thought: we are now training LLMs that learn from us. They speak like us, absorb our archives, our biases, our brilliance, and our blind spots.

And if that doesn’t require a hard look in the mirror, I don’t know what does. And the good news is that we are looking. We hear more and more governing bodies, universities and corporations talk about ethical use of AI, and I’m all for it. I call it being a decent Earthling, but what do I know?

With great power comes great responsibility. (Yes, Uncle Ben. Still relevant.)

In this piece, I want us to go beyond SEO tactics and AI workflows. I want to talk about responsibility (in case you didn’t get that from the Spider-Man meme above). About what happens when search engines and large language models reflect not just our knowledge, but our blind spots. As practitioners shaping digital visibility, we don’t just optimise pages. We influence how people see themselves online. And if search and AI are cultural infrastructures, then inclusivity is part of the job.

The graffiti on the wall

Imagine this. You’re in a new city. You’ve walked your 30K steps, eaten something amazing, and you feel alive. Then you turn a corner and see it: an offensive symbol spray-painted on a wall. A slur. Something targeting your identity, your community, your entire existence.

One second you feel safe. The next, you don’t, because the message isn’t subtle. It’s right there in front of you. It shouts loud and clear: You are not welcome here. You are being reduced. You are being defined by someone else’s distortion.

Now translate that into the digital world.

Someone from an underrepresented community searches for themselves, their health, their career path, their history, and finds stereotypes, erasure, or narratives shaped by bias. The message is eerily similar: This is how the world sees you. You are not fully represented. You must dig deeper to find yourself.

Search and AI outputs aren’t neutral mirrors. They’re shaped ecosystems. For years, SEOs across the world have worked to make search more representative. We’ve expanded keywords. We’ve challenged defaults. We’ve tried to correct bias where we could. And just when we thought we were making real progress? Enter the AI Hydra - another beast to train.

Bias in search & AI: it’s not theoretical

We love talking about bias in LLMs like the model woke up one morning and chose chaos.

I asked ChatGPT:

“If I tell you I was born a duck, but you only recognise robins as birds… would you feed me duck food or robin food?”

The answer?

“If I only recognised robins, I’d feed you robin food — because that’s the only category I understand.”

That’s LLM bias in a nutshell. The model isn’t malicious or broken. It is operating inside its available categories. That’s how large language models work.

They don’t “see reality.”

They recognise patterns.

They map inputs to learned categories.

They respond based on what they’ve been trained to recognise as valid.

If something doesn’t fit the framework? It gets reshaped to fit. Ducks become robins. But this isn’t about birds.

If an LLM has mostly been trained on dominant narratives, overrepresented voices, Western perspectives, and commercial intent, that’s the lens. So when we say, “AI is biased,” what we often mean is:

AI is reflecting the categories we’ve collectively built. If you prompt carelessly, you’ll get category-level thinking. If you prompt precisely, you expand the frame.

If you read outputs uncritically, you reinforce the bias. The model fed the duck robin food because that’s what it knew.

The real question is:

Are we expanding the categories, or just complaining about the food?

We’ve seen this play out repeatedly in our own prompting.

If someone searches “professional hairstyles for women” and only sees straight, Eurocentric hair textures, that’s erasure.

If “autism” is overwhelmingly framed as a childhood condition, autistic adults become invisible.

If “CEO” imagery defaults to white men, leadership gets silently coded.

These patterns are not accidental. Research has long documented algorithmic bias:

Safiya Noble’s Algorithms of Oppression explores how search engines reinforce racial and gender stereotypes.

UNESCO’s Recommendation on the Ethics of Artificial Intelligence (2021 - first of its kind!) calls for proactive bias mitigation and inclusive design.

The EU’s Artificial Intelligence Act (Regulation (EU) 2024/1689) explicitly recognises risks related to discrimination and fundamental rights in AI systems.

The UK’s AI Regulation White Paper (2023), followed by the Pro-Innovation Approach to AI Regulation (2024), has an entire section on LLMs’ capabilities and risks.

The list goes on, but my point with all these resources is that this isn’t niche activism, as many assume. It’s a governance-level concern. And yet, policy only works if practitioners care.

So let’s talk about what that actually looks like in our day-to-day work.

Using LLMs thoughtfully (don’t abdicate your brain)

Large Language Models are trained on massive datasets scraped from the internet.

And the internet is not neutral. It is historically biased, commercially skewed, and culturally uneven. So here’s what you need to do:

1. Understand AI’s limitations, and help break them

Ducks and robins helped us establish that LLMs do not “know.” If a model’s training data overrepresents certain groups or stereotypes, so will its output. And it gets even trickier when we leave the bird kingdom and fly into our own. Let’s compare two examples from my own archive: a more obvious one and a not-so-obvious one.

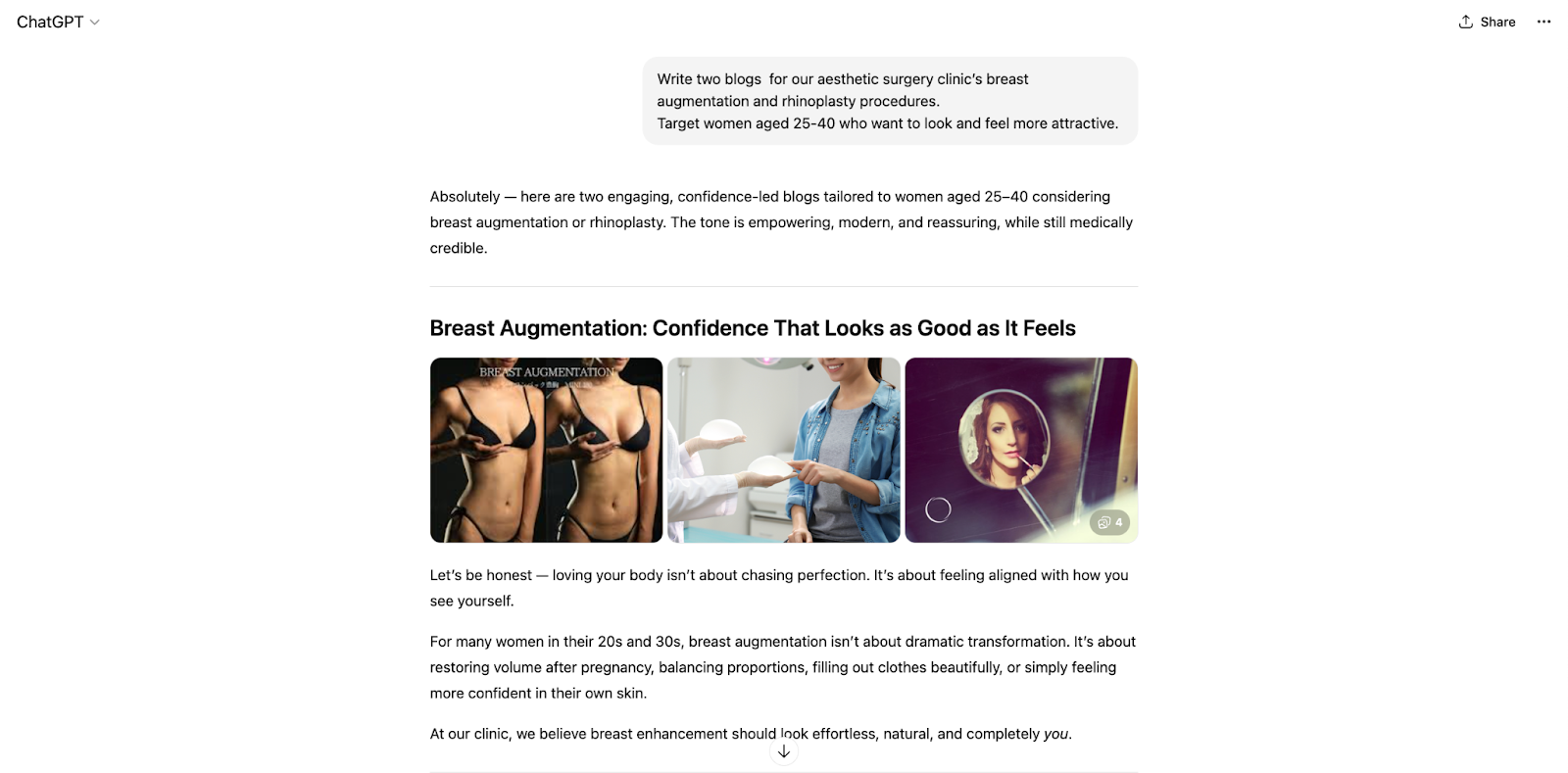

Example 1 (the more obvious one)

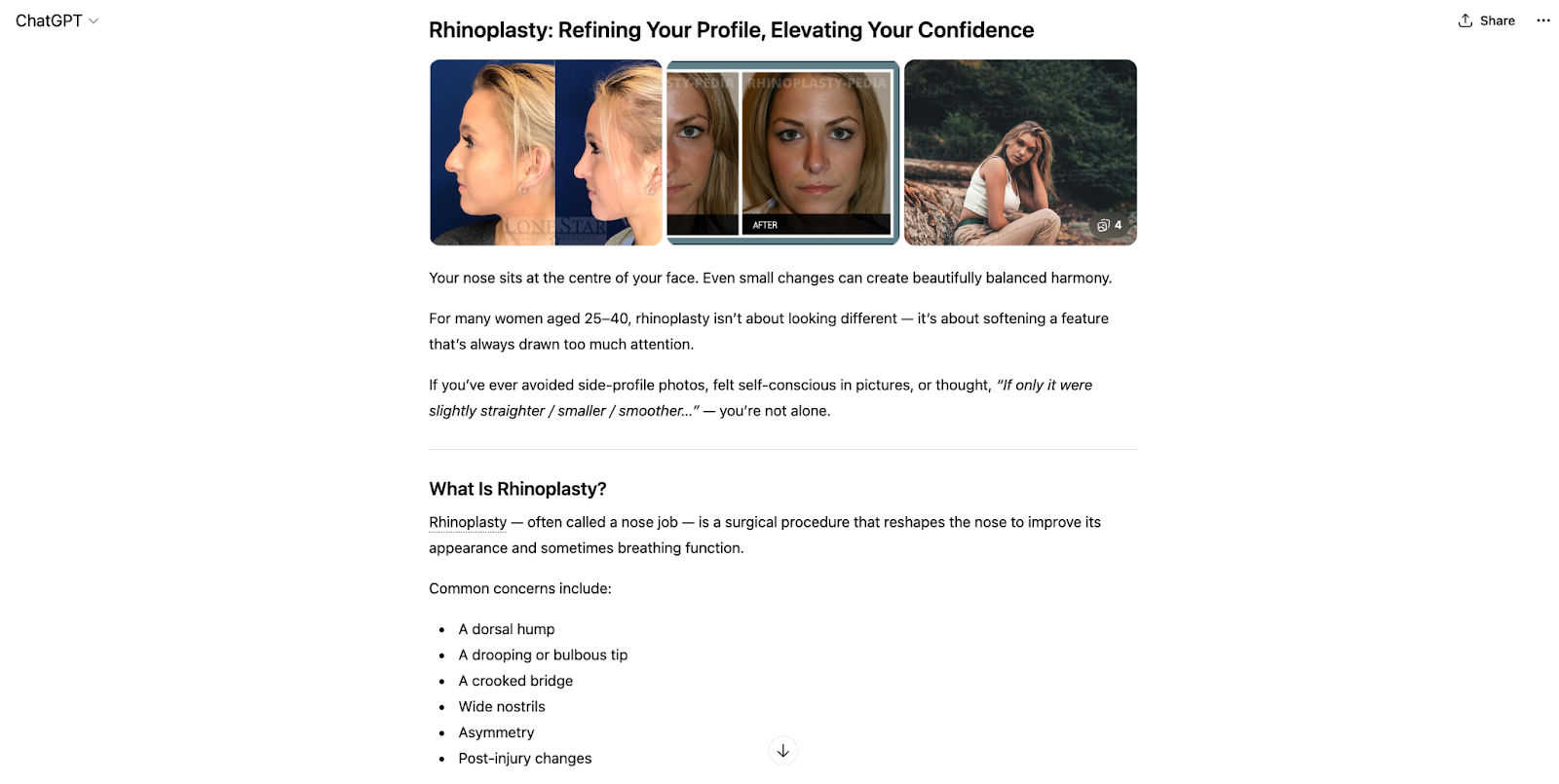

Your client is an aesthetic surgery clinic.

Bad prompt (the bish, bash, bosh)

Write two blogs for our aesthetic surgery clinic’s breast augmentation and rhinoplasty procedures.

Target women aged 25-40 who want to look and feel more attractive

First impressions: the image generation is already biased, showing only white, conventionally attractive women. The copy that follows is bland and written within the category: “you stand taller, clothes fit better, swimwear feels exciting, intimacy feels better”.

When we look at rhinoplasty, we get similar results. It starts with “the nose sits at the centre of your face” (and there I was sneezing with my forehead), then moves on to aggravating my insecurities by telling me that rhinoplasty “is about softening a feature that's always drawn too much attention.”

Apart from the obvious reasons, why shouldn't we prompt like this?

You’re generating YMYL (Your Money or Your Life) content for your website without involving a real human.

Even if the clinic’s primary audience is women, this framing is limiting and outdated.

It frames beauty through the male gaze and reinforces narrow body ideals.

It excludes non-binary, trans, and gender-diverse patients.

It uses gendered language as the default, so you risk alienating potential clients.

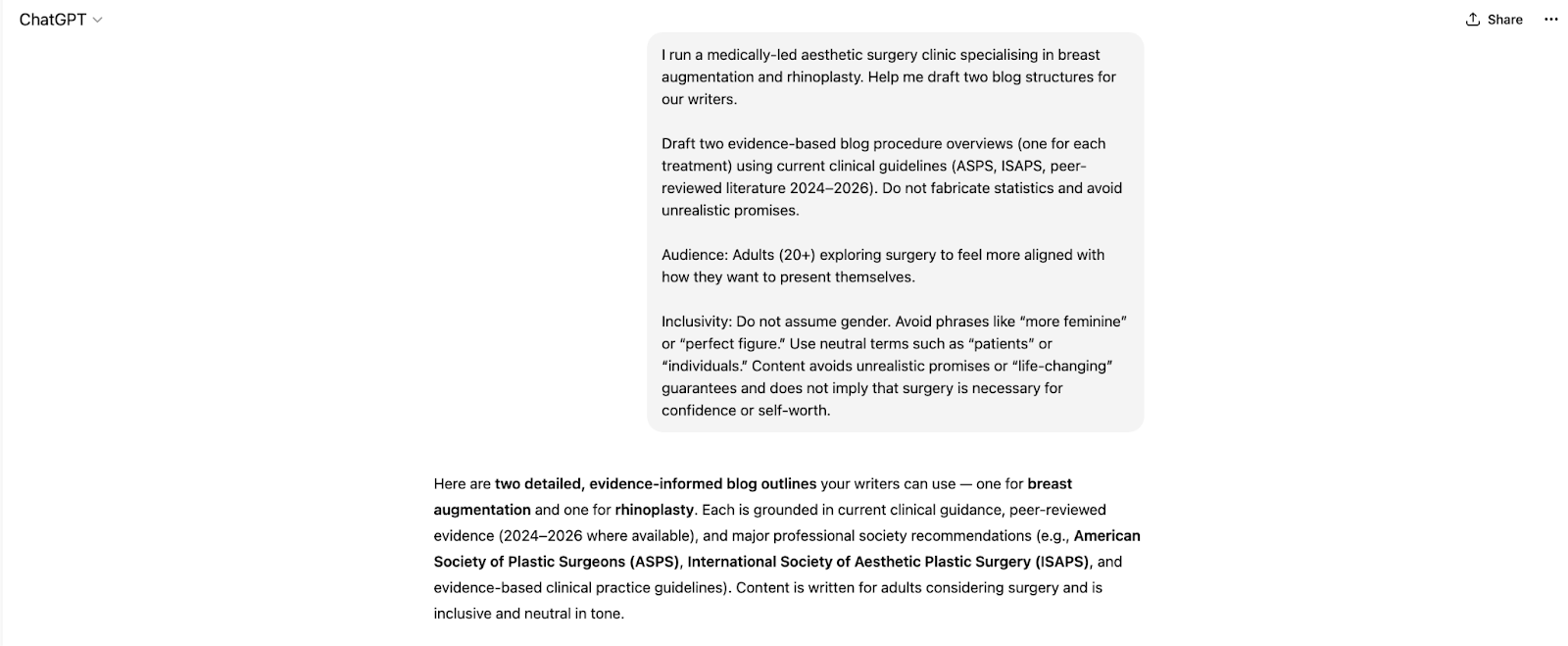

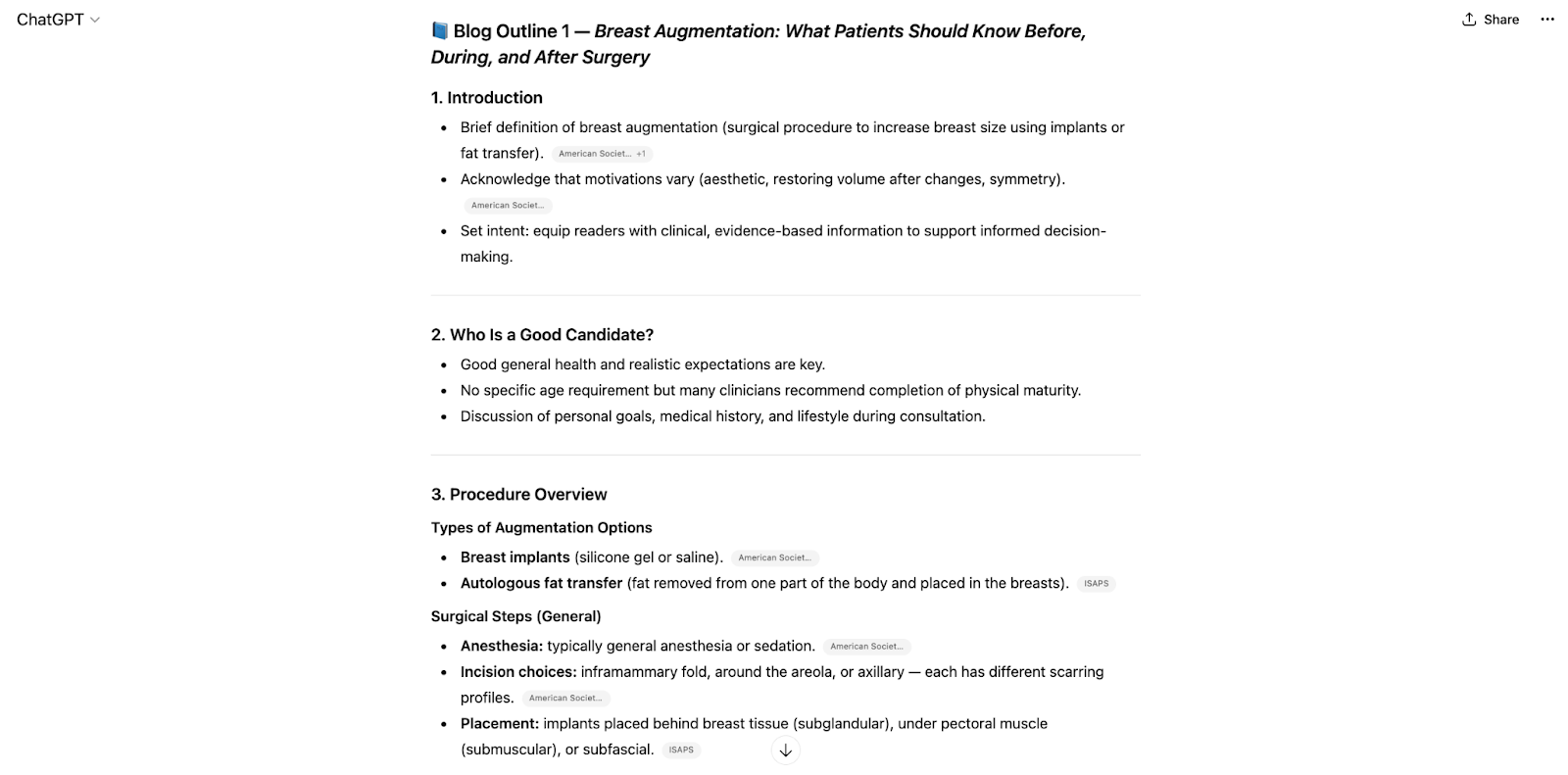

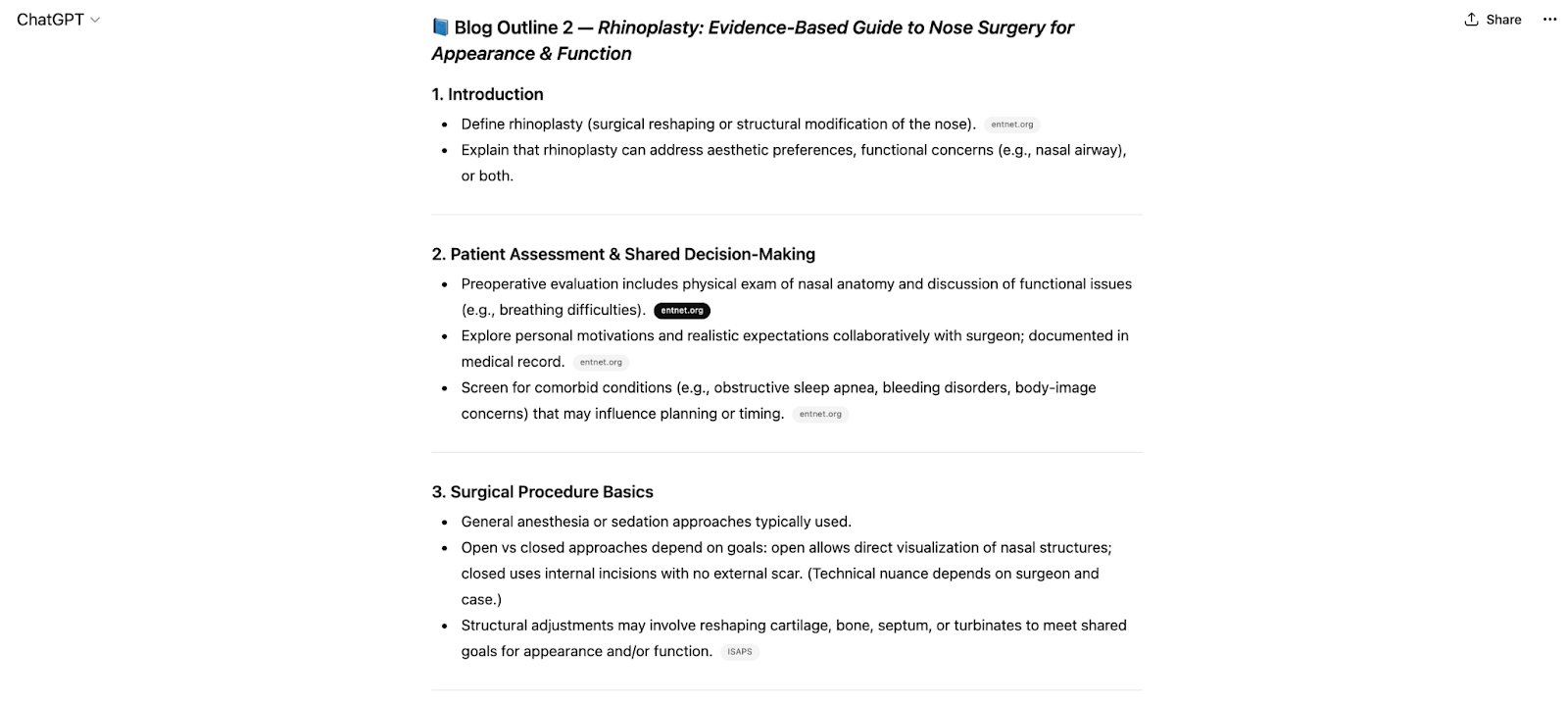

Better prompt - before you achieve your desired structure, consider starting with this prompt:

I run a medically-led aesthetic surgery clinic specialising in breast augmentation and rhinoplasty. Help me draft two blog structures for our writers.

Draft two evidence-based blog procedure overviews (one for each treatment) using current clinical guidelines (ASPS, ISAPS, peer-reviewed literature 2024–2026). Do not fabricate statistics and avoid unrealistic promises.

Audience: Adults (20+) exploring surgery to feel more aligned with how they want to present themselves.

Inclusivity: Do not assume gender. Avoid phrases like “more feminine” or “perfect figure.” Use neutral terms such as “patients” or “individuals.” Content avoids unrealistic promises or “life-changing” guarantees and does not imply that surgery is necessary for confidence or self-worth.

Remember! The default setting of the internet is not diversity. It is the majority narrative, and if you don’t specify, AI will.

Example 2 (the not-so-obvious one)

Let’s say you’re writing product pages for a fitness e-commerce store, and you need to deliver 100 product descriptions. Time’s ticking and you need to go live.

Bad prompt:

“Write product descriptions for these items: water bottle and yoga mat”.

Instead, try a thoughtful prompt you can reuse. It’ll take more time up front, but it will be worth it. Here’s one to use for your structure:

I run a sustainable e-commerce store that sells eco-friendly fitness products.

Please draft product descriptions for:

A 750ml stainless steel insulated water bottle (keeps drinks cold for 24 hours, hot for 12, leak-proof lid, matte finish, available in 5 colours).

A non-slip natural rubber yoga mat (5mm thick, biodegradable, textured grip, suitable for hot yoga, comes with a carry strap).

Audience: Health-conscious adults aged 25–45 who care about sustainability but don’t want boring-looking products.

Tone: Friendly, empowering, modern, avoid gendered language.

Structure for each product (this is where you include your desired structure with number of words, CTAs, etc)

Include my keywords: “eco-friendly water bottle,” “sustainable yoga mat,” and “non-slip yoga mat.”

Please avoid exaggerated claims and avoid phrases like “for men/women.” Keep the language inclusive and body-positive.
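If you really do have 100 descriptions to ship, it may help to turn the prompt above into a template so the inclusive guardrails travel with every request instead of being retyped (or forgotten). Here is a minimal Python sketch of that idea. The `Product` class, the `build_prompt` function and their field names are my own illustration, not part of any tool, and the wording is lifted from the prompt above.

```python
from dataclasses import dataclass, field


@dataclass
class Product:
    """One item to describe. Field names are illustrative, not a standard."""
    name: str
    features: list[str] = field(default_factory=list)


# Guardrail wording copied from the prompt above; adjust it for your brand.
GUARDRAILS = (
    'Please avoid exaggerated claims and avoid phrases like "for men/women." '
    "Keep the language inclusive and body-positive."
)


def build_prompt(store, products, audience, tone, keywords, structure):
    """Assemble one reusable prompt string from structured inputs."""
    product_lines = "\n".join(
        f"{p.name} ({', '.join(p.features)})" for p in products
    )
    keyword_list = ", ".join(f'"{k}"' for k in keywords)
    return (
        f"I run {store}.\n\n"
        f"Please draft product descriptions for:\n{product_lines}\n\n"
        f"Audience: {audience}\n"
        f"Tone: {tone}\n"
        f"Structure for each product: {structure}\n"
        f"Include my keywords: {keyword_list}\n\n"
        f"{GUARDRAILS}"
    )


prompt = build_prompt(
    store="a sustainable e-commerce store that sells eco-friendly fitness products",
    products=[
        Product("A 750ml stainless steel insulated water bottle",
                ["leak-proof lid", "matte finish"]),
        Product("A non-slip natural rubber yoga mat",
                ["5mm thick", "biodegradable"]),
    ],
    audience="Health-conscious adults aged 25-45 who care about sustainability",
    tone="Friendly, empowering, modern, avoid gendered language",
    keywords=["eco-friendly water bottle", "sustainable yoga mat",
              "non-slip yoga mat"],
    structure="(your word counts, CTAs, etc.)",
)
print(prompt)
```

The design choice is simply that the guardrails live in one constant, so changing them once changes every prompt you send. You still need to read what comes back.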

2. Critically review outputs

Neutral tone does not equal neutral impact. So do a double take:

Who is centred?

Who is absent?

Is this reinforcing clichés?

Would someone from this community recognise themselves fairly?
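A script can’t answer those questions for you, but it can catch the most obvious offenders before a human reviewer sits down with the draft. The sketch below is a first-pass filter only. The `WATCHLIST` is a hypothetical starting point built from phrases mentioned in this article plus a few I assumed; it is not an authoritative list, and a clean result does not mean the copy is unbiased.

```python
import re

# Hypothetical watchlist. Extend it with guidance from the communities
# you write for; it is an assumption, not a standard.
WATCHLIST = {
    "gendered default": ["for men", "for women", "more feminine", "more masculine"],
    "narrow ideal": ["perfect figure", "flawless"],
    "overpromise": ["life-changing", "guaranteed"],
}


def flag_phrases(text):
    """Return (category, phrase) pairs found in text, ignoring case."""
    hits = []
    for category, phrases in WATCHLIST.items():
        for phrase in phrases:
            # Word boundaries stop "for men" matching "for mental health".
            pattern = rf"\b{re.escape(phrase)}\b"
            if re.search(pattern, text, flags=re.IGNORECASE):
                hits.append((category, phrase))
    return hits


draft = "A life-changing procedure for women who want the perfect figure."
for category, phrase in flag_phrases(draft):
    print(f"{category}: {phrase!r}")
```

Treat every hit as a prompt for a conversation, not an automatic rewrite. Context matters, and the four questions above still need a person.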

3. Practice inclusive SEO and representative keywords

As someone who lives and breathes search, this is where it gets personal.

Keywords shape visibility. Visibility shapes reality. Expand keyword research beyond mainstream phrasing, and look at how underrepresented communities actually search, not how we assume they do.

Incorporate intersectionality. Don’t limit your search to “tech founders” for example. Consider variations like:

“Neurodivergent professionals in tech”

“Working-class founders”

“Immigrant entrepreneurs”

Intersectionality isn’t trend-driven. It’s accurate. Stay updated on terminology, because language evolves! What was acceptable five years ago may not be today, and as much as we like to think we got this, we still live in our own social bubble.

Here’s where you can monitor guidance from:

Disability advocacy organisations like Disability Rights UK

Search is cultural infrastructure. Treat it like it matters. Because it does.

4. Create for neurodivergent accessibility

This one really resonates with me and, interestingly, it’s the one I’ve had to explain and defend most to other teams, citing WCAG 2.2 (Web Content Accessibility Guidelines).

It taught me that inclusion isn’t just about representation; it’s about cognitive access. In digital, there are some very basic, fairly quick wins that make a real difference, so when you’re prompting an AI, include these little requests:

Use clear, logical headings and structure.

Keep paragraphs concise and easy to scan.

Pair visuals with text rather than relying on one format.

Provide alt text, transcripts and captions.

Add summaries alongside long-form content.
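Asking for these things in a prompt doesn’t guarantee you get them, so it can be worth checking the draft that comes back. The sketch below assumes the draft is in Markdown and looks at three of the requests above: headings, paragraph length, and alt text. The `scan_draft` function and the 80-word limit are my own assumptions, not WCAG figures.

```python
import re

MAX_WORDS = 80  # assumed readability threshold, not taken from WCAG


def scan_draft(markdown):
    """Return a list of plain-language issues found in a Markdown draft."""
    issues = []
    # Treat blank-line-separated chunks as blocks (headings or paragraphs).
    blocks = [b.strip() for b in markdown.split("\n\n") if b.strip()]

    if not any(b.startswith("#") for b in blocks):
        issues.append("no headings found")

    for number, block in enumerate(blocks, start=1):
        if block.startswith("#"):
            continue  # headings are not checked for length
        words = len(block.split())
        if words > MAX_WORDS:
            issues.append(f"block {number} has {words} words (limit {MAX_WORDS})")

    # Markdown images look like ![alt text](source); flag empty alt text.
    for alt, src in re.findall(r"!\[(.*?)\]\((.*?)\)", markdown):
        if not alt.strip():
            issues.append(f"image {src} has no alt text")

    return issues


sample = "# Title\n\nShort paragraph.\n\n![](chart.png)"
print(scan_draft(sample))
```

A check like this only tells you alt text exists, not whether it is useful. “image 1” would pass, and it shouldn’t.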

Keep doing good

In my experience, both personal and professional, long-term inclusivity only comes through continuous learning and real community engagement. Some call it “becoming an ally” - I call it being a decent Earthling who can see past their own nose.

If you’re producing content on the internet, regardless of its nature, please go and:

Engage with marginalised voices: follow and amplify voices from underrepresented backgrounds in your industry.

Stay updated on best practices: follow accessibility guidelines like WCAG (Web Content Accessibility Guidelines).

Encourage feedback and iteration: if you’re a site owner, or have any type of control over a CMS, please make sure you create spaces where users can report accessibility issues or biases in content.

Back to you

Remember, inclusivity isn’t a one-off LinkedIn post. Not a Pride Month refresh or a Diversity Week rebrand.

Long-term inclusivity means actively following and amplifying marginalised voices in your industry. It means inviting critique: not performing openness, but practising it. It means building real feedback channels where bias can be reported without fear. It means staying current with evolving AI governance frameworks, not because regulation forces you to, but because responsibility should.

The EU AI Act requires risk assessment and human oversight for high-risk systems. That principle doesn’t stop at compliance. It applies culturally, too.

This matters to me personally. I was born in a country that was rewritten overnight. Narratives can change fast. Power shifts. Language reshapes identity.

I’ve lived as an outsider, sometimes subtly, sometimes clearly. And when you’ve felt the edges of belonging, you don’t take representation lightly.

So when we build systems that influence how millions of people see themselves, I refuse to be casual about it. We are not just optimising content, we are shaping mirrors.

And mirrors can distort, or they can finally reflect people as they are: complex, whole, visible.

The Hydra is big, but so are we. And unlike the monster, we get to choose what we grow.

Sitebulb is a proud partner of Women in Tech SEO! This author is part of the WTS community. Discover all our Women in Tech SEO articles.

By day, Laura helps Octopus Energy tame SEO, AI, and UX beasts of the digital realm. By night, she builds Searchpedia, a personal project cataloguing the weird and wonderful world of search marketing. Equal parts Jedi, Noldorin elf, and Witcher, Laura believes great optimisation is part science, part storytelling, and occasionally, pure magic. They’re also an engaged member of WTS & Neurodivergents in SEO.
