
Technical SEO for Ecommerce: A Comprehensive Guide

Published 2024-06-28

Today we thank Sam Taylor, Technical SEO Lead at Evolved, who’s provided us with a comprehensive guide to technical SEO for ecommerce.

Getting noticed is half the battle of ecommerce success; that's where technical SEO comes in. By making sure search engines can easily navigate and comprehend your website, you lay the foundations for a successful SEO campaign.

In this guide, you’ll gain the knowledge and tools needed to optimize your ecommerce site for technical SEO.


Contents:

- Chapter 1: Website Architecture and Navigation

- Chapter 2: Page Speed and Mobile Optimization

- Chapter 3: Website Crawling and Auditing Tools

Chapter 1: Website Architecture and Navigation

With ecommerce websites, there are multiple components to consider. Category pages list many product pages, the site may have a blog with its own posts, and filters let users sort and refine what they see, often creating new URLs in the process. That is why it is crucial that the architecture of your website works well.

Your website navigation needs to be clear and intuitive, allowing visitors to easily find what they’re looking for. It needs to be scalable, because as your business grows, your website will too – can you easily expand and organize new content and products as your stock increases in size and complexity?

URL Structure

URLs are a simple way for search engines and users to understand your website and how pages are connected to each other.

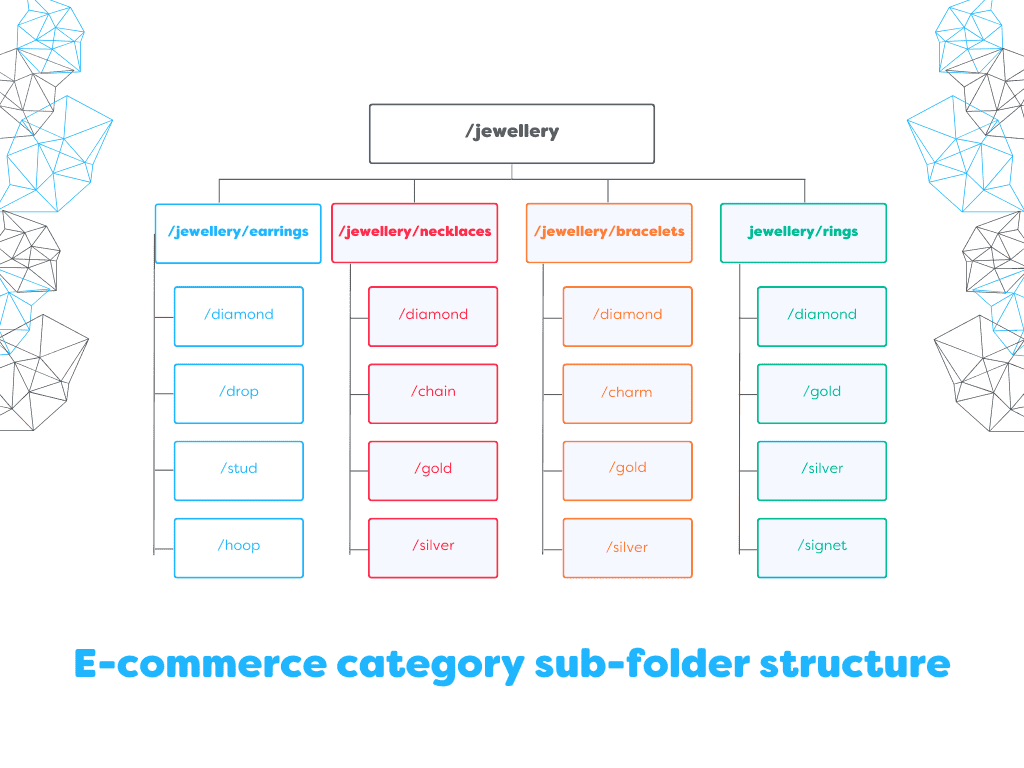

Category URL Structure

You want to avoid using a flat URL structure for categories, for example:

- example.com/tshirts

- example.com/blue-tshirts

- example.com/womens-blue-tshirts

A flat URL structure can make it incredibly difficult for search engines to understand the hierarchy of your website, and how pages should be prioritized. Instead, try using subfolders to categorize your pages:

- example.com/womens

- example.com/womens/t-shirts

- example.com/womens/t-shirts/blue

This URL structure makes the site hierarchy clear and lets you create a pyramid structure where all relevant subcategories are housed under the main parent.

To help users refine or sort content, your ecommerce website will more than likely use faceted navigation: URL parameters that dynamically change category content depending on certain attributes, like size or colour.

- example.com/womens/t-shirts?size=m

- example.com/womens/t-shirts?size=m&colour=blue

A poll from 2022 found that canonical tags are the most popular way to handle the indexing of these pages, though there are other options, including the use of noindex tags and robots.txt. This is explained in more detail during the sitemap and robots.txt portion of this guide.

It is recommended to avoid internally linking to parameter pages outside of the filters to help Google understand that these pages should not be indexed.
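As a rough illustration of that check, the sketch below scans a list of internal link targets (for example, exported from a site crawl) and flags any that carry query parameters. The function name `find_parameter_links` and the sample URLs are illustrative assumptions, not part of any particular tool.

```python
from urllib.parse import urlsplit


def find_parameter_links(links):
    """Return the link targets that contain a query string."""
    return [url for url in links if urlsplit(url).query]


# Hypothetical link targets found outside the filter UI.
internal_links = [
    "https://example.com/womens/t-shirts",
    "https://example.com/womens/t-shirts?size=m",
    "https://example.com/womens/t-shirts?size=m&colour=blue",
]

flagged = find_parameter_links(internal_links)
for url in flagged:
    print("Links to a parameter page:", url)
```

Anything flagged here is a candidate for pointing at the clean category URL instead.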

Product URL Structure

Product URLs are a little bit different. You’ll want to avoid using subfolders in your URLs here, as products can fall under two or more categories, meaning you’d have the same product available on multiple pages:

- example.com/womens/navy-blue-womens-t-shirt-with-collar

- example.com/womens/t-shirts/navy-blue-womens-t-shirt-with-collar

- example.com/womens/t-shirts/blue/navy-blue-womens-t-shirt-with-collar

You could try to rely on canonical tags for this by selecting one canonical URL and canonicalizing the rest, but in my experience, it can get quite messy: canonical tags can be ignored, and relying on them isn’t going to ensure search engines crawl your site efficiently.

Ideally, product URLs need to be under the root domain with no subfolders used. The path should be descriptive of the product, simple to read and contain keywords where applicable. Some websites also choose to add the product SKU here, especially large sites where there may be multiple products with similar names. Ultimately, if the URL is readable, easy to understand and free from duplication, the exact format won’t make a huge difference.

- example.com/navy-blue-womens-t-shirt-with-collar

NOTE: Some content management systems like Shopify can add /product/ or /p/ to your product URLs, which should be fine to leave as they are, as they’re not creating duplicate pages. If anything, it just makes your life a little easier when looking at performance in GSC!

If your site uses product variants, Google recommends also using parameters to handle them, like you would with faceted navigation. They specifically recommend the use of canonicals in this case, where all parameter variants point back to the canonical URL, like so:

- example.com/womens-t-shirt-with-collar (canonical URL)

- example.com/womens-t-shirt-with-collar?colour=blue (canonicalized)

- example.com/womens-t-shirt-with-collar?colour=green (canonicalized)
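A minimal sketch of that mapping, assuming every query parameter on a product URL only selects a variant (if a parameter changes the core content of the page, it would need to be kept). The helper names `canonical_url` and `canonical_link_tag` are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit


def canonical_url(url):
    """Drop the query string and fragment so every variant maps to one URL."""
    parts = urlsplit(url)
    return urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))


def canonical_link_tag(url):
    """Build the <link rel="canonical"> element for the page's <head>."""
    return f'<link rel="canonical" href="{canonical_url(url)}">'


variant = "https://example.com/womens-t-shirt-with-collar?colour=blue"
print(canonical_link_tag(variant))
```

Each variant page would output the same tag, so the blue and green URLs both point at the parameter-free product URL.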

Internal Linking

Even though Google removed PageRank from GSC (formerly Webmaster Tools) in 2009, PageRank is still a metric that Google uses internally.

Because it is still in use, internal linking is incredibly important on your website. Imagine you have a lot of links pointing to your website, but the linked page has no links to deeper pages or other useful content. You’ve essentially created a dead end that stops PageRank, or ‘authority’, from flowing through your website. Try to imagine yourself as a visitor who has clicked on a link; where else would you want to go, and can you see an easy way to get there?

Consider how users and search engines flow through your website and the journey that involves, and let that influence how you structure your navigational and contextual links. As a technical SEO, you can also audit your internal links to make this process more efficient: remove broken links and links to pages that are canonicalized to another URL, and make sure breadcrumbs are in place and working across the site, with structured data to support them.
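To make the breadcrumb point concrete, here is a small sketch that derives schema.org `BreadcrumbList` structured data from a hierarchical category URL. It assumes each path segment corresponds to a real, linkable page, and the `names` lookup for display labels is a hypothetical input you would supply from your own catalogue.

```python
import json
from urllib.parse import urlsplit


def breadcrumb_jsonld(url, names):
    """Build BreadcrumbList data with one ListItem per path segment."""
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    items = []
    for position, segment in enumerate(segments, start=1):
        path = "/" + "/".join(segments[:position])
        items.append({
            "@type": "ListItem",
            "position": position,
            # Fall back to the raw segment if no display name is known.
            "name": names.get(segment, segment),
            "item": f"{parts.scheme}://{parts.netloc}{path}",
        })
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": items,
    }


labels = {"womens": "Women's", "t-shirts": "T-Shirts", "blue": "Blue"}
data = breadcrumb_jsonld("https://example.com/womens/t-shirts/blue", labels)
print(json.dumps(data, indent=2))
```

The printed JSON would typically be placed in a `<script type="application/ld+json">` element alongside the visible breadcrumb trail.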

A two-month project by Sam Underwood for a jewellery site involved a large audit that focused heavily on resolving internal links with issues – you can find his case study and results here. Initially fixing issues like canonicalized internal links, he also invested time into creating a pyramid site and internal linking structure, where broad pages link down to more specific pages, highlighting the importance and benefit that a strategic internal linking structure can have.

Sitemaps and Robots.txt

Robots.txt and XML sitemaps play pivotal roles in shaping how search engines crawl and index your website. By using these effectively, you can guide search engine crawlers to focus on the most crucial pages, ensure efficient crawling and encourage a better understanding of your site's structure.

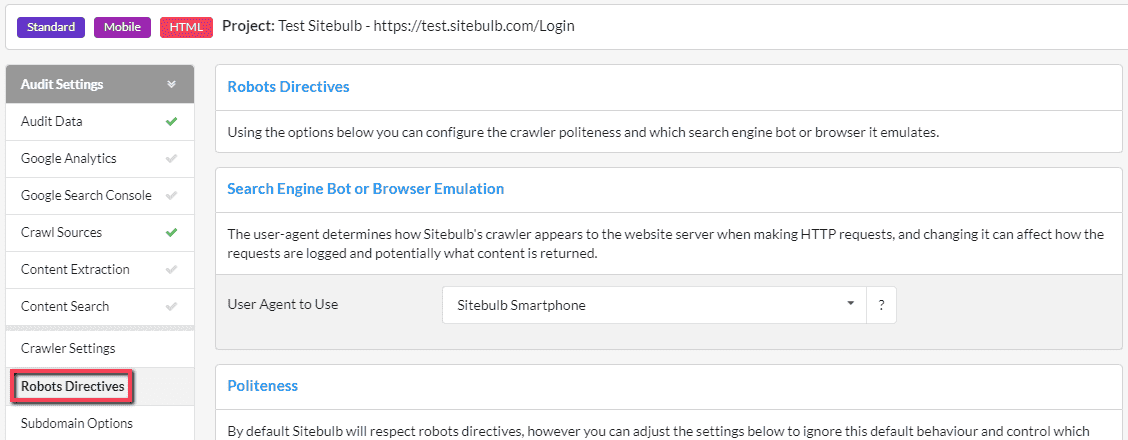

Robots.txt

Large sites may want to limit the number of pages Google can crawl, which is where robots.txt comes in useful. Parameters cause several crawling issues on ecommerce sites, because they can create thousands, sometimes millions, of page variations, often unintentionally.

I spoke at BrightonSEO’s Crawling & Indexation Summit back in 2021 about an ecommerce client we had done this for. There were over 36,000,000 URLs excluded from indexing in Google Search Console, most of which were parameter pages. Compared with the 13,700 valid and indexed URLs, this pointed to a clear crawling issue on the site: while Google wasn’t indexing these pages, our priority pages weren’t being crawled enough.

We implemented robots.txt rules to prevent Google from crawling category pages with parameters, and the results spoke for themselves:

- Crawl rate dropped from over 1,000,000 URLs a day to approximately 30,000 (a site crawl produced approximately 26,000 URLs with exclusions)

- Number of indexed pages increased from 13,700 to over 22,000.

By implementing exclusions, we were able to ensure search engines can regularly crawl the pages we do want indexed.

Now, something that works for one site may not work for another – and this is something that Technical SEOs need to accept. For example, one site can be fine using canonical tags to handle parameters across the site, whereas another site may have these canonicals ignored by Google and the URLs indexed anyway, resulting in the need to use robots.txt.

Some sites even use noindex tags as a solution instead. As an SEO, it is up to you to decide the best option for your website using the data and information you have available. There is no one-size-fits-all approach, which is often why SEO topics are subject to debate.

Implementing robots.txt rules can be worrying, as sometimes you may block more than intended – I think we’ve all heard a horror story or two about an accidentally disallowed website before. If you want, you can test out your robots.txt rules before going live. Many website crawling tools offer a virtual robots.txt to emulate, including Sitebulb.
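If you’d rather sanity-check a rule yourself first, the sketch below is a simplified take on Google-style pattern matching (`*` as a wildcard, a trailing `$` as an end anchor, longest matching rule wins, `Allow` wins ties). It ignores user-agent groups and other details a real parser handles, and the rule and paths are hypothetical examples rather than the rules used for the client above.

```python
import re


def rule_to_regex(pattern):
    """Translate a robots.txt path pattern into a compiled regex."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # '*' matches any run of characters; everything else is literal.
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_allowed(path, rules):
    """rules: list of (directive, pattern) pairs. Default is allowed."""
    best = ("allow", "")
    for directive, pattern in rules:
        if pattern and rule_to_regex(pattern).match(path):
            longer = len(pattern) > len(best[1])
            tie = len(pattern) == len(best[1]) and directive == "allow"
            if longer or tie:
                best = (directive, pattern)
    return best[0] == "allow"


# Equivalent to the hypothetical line:  Disallow: /womens/*?
rules = [("disallow", "/womens/*?")]

for path in [
    "/womens/t-shirts",
    "/womens/t-shirts?size=m",
    "/womens-t-shirt-with-collar?colour=blue",
]:
    print(path, "->", "allowed" if is_allowed(path, rules) else "blocked")
```

Running your priority URLs through a check like this before deployment is a quick way to spot a rule that blocks more than intended.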

XML Sitemaps

XML sitemaps are files you can upload to your website that contain a list of URLs you want Google to crawl. They’re not imperative, as Google can still crawl your website without them, but they do provide a good starting point as well as a better understanding of your site structure.

It’s highly recommended to ensure that your sitemap is dynamic and configured to list only live (200 status) and indexable URLs, not URLs that are canonicalized to another URL or noindexed. Your products may change daily, and your categories will be updated to reflect that, so maintaining the sitemap manually is too much work.
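A minimal sketch of that filtering logic, assuming your platform can supply a record per page with the hypothetical fields `url`, `status`, `canonical` and `noindex`. A real dynamic sitemap would pull these from your CMS or database rather than a hard-coded list.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML listing only live, self-canonical, indexable URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        is_live = page["status"] == 200
        self_canonical = page["canonical"] == page["url"]
        if is_live and self_canonical and not page["noindex"]:
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = page["url"]
    return ET.tostring(urlset, encoding="unicode")


base = "https://example.com/womens/t-shirts"
pages = [
    {"url": base, "status": 200, "canonical": base, "noindex": False},
    {"url": base + "?size=m", "status": 200, "canonical": base, "noindex": False},
    {"url": "https://example.com/old-range", "status": 404,
     "canonical": "https://example.com/old-range", "noindex": False},
    {"url": "https://example.com/basket", "status": 200,
     "canonical": "https://example.com/basket", "noindex": True},
]

sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

Only the clean category URL survives the filter; the parameter page, the 404 and the noindexed page are left out.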

For websites that don’t change as often, or for smaller ecommerce websites with a niche product line, you may be able to use a manual sitemap. This can be quickly generated following a site crawl using Sitebulb’s XML Sitemap Generator. You can also conduct a sitemap audit with Sitebulb.

One of the best ways technical SEOs can use sitemaps, especially for larger ecommerce websites with tens of thousands of products and pages, is for analysis. Generally, you don’t need to split your sitemap into multiple files under a sitemap index unless a file exceeds 50MB (uncompressed) or 50,000 URLs, but I typically recommend doing so for ecommerce clients.

Each sitemap can be added to GSC, which allows you to view a URL’s index status more granularly and identify issues quickly. Learn how to create sitemap indexes here.
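As one possible way to organize this, the sketch below groups URLs by page type, splits each group into files of at most 50,000 URLs, and builds a sitemap index that references them. The file naming scheme (`sitemap-products-1.xml` and so on) and the grouping by page type are assumptions for illustration; choose whatever segmentation is most useful for your own analysis.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000  # per-file URL limit in the sitemap protocol


def chunk(urls, size=MAX_URLS):
    """Split a list of URLs into consecutive parts of at most `size`."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def plan_sitemaps(groups, base="https://example.com"):
    """Map each sitemap file URL to the page URLs it should list."""
    plan = {}
    for name, urls in groups.items():
        for number, part in enumerate(chunk(urls), start=1):
            plan[f"{base}/sitemap-{name}-{number}.xml"] = part
    return plan


def build_sitemap_index(sitemap_urls):
    """Return sitemap index XML pointing at each child sitemap."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for sitemap_url in sitemap_urls:
        ET.SubElement(ET.SubElement(index, "sitemap"), "loc").text = sitemap_url
    return ET.tostring(index, encoding="unicode")


groups = {
    "categories": ["https://example.com/womens", "https://example.com/womens/t-shirts"],
    "products": [f"https://example.com/product-{n}" for n in range(120_000)],
}
plan = plan_sitemaps(groups)
index_xml = build_sitemap_index(plan.keys())
print(list(plan.keys()))
```

With products and categories in separate files, each one can be submitted and inspected on its own in GSC.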

HTML Sitemaps

You can also add an HTML sitemap to your website, but this is not something that I have looked at for many years. In the last 5 years, I have recommended and implemented it for only one client, who had severe issues with internal linking and mobile friendliness that we couldn’t resolve easily for several reasons, so an HTML sitemap helped to bridge that gap in the short term. Otherwise, it’s something that we skip in audits.

If your website navigation and structure are sound, you shouldn’t need one. Even Google has said “they should never be needed”.

Chapter 2: Page Speed and Mobile Optimization

Site speed and mobile experience are essential components of modern SEO strategies, and auditing them matters because these factors can significantly impact users, search engine rankings, and overall website performance.

Page Speed Optimization

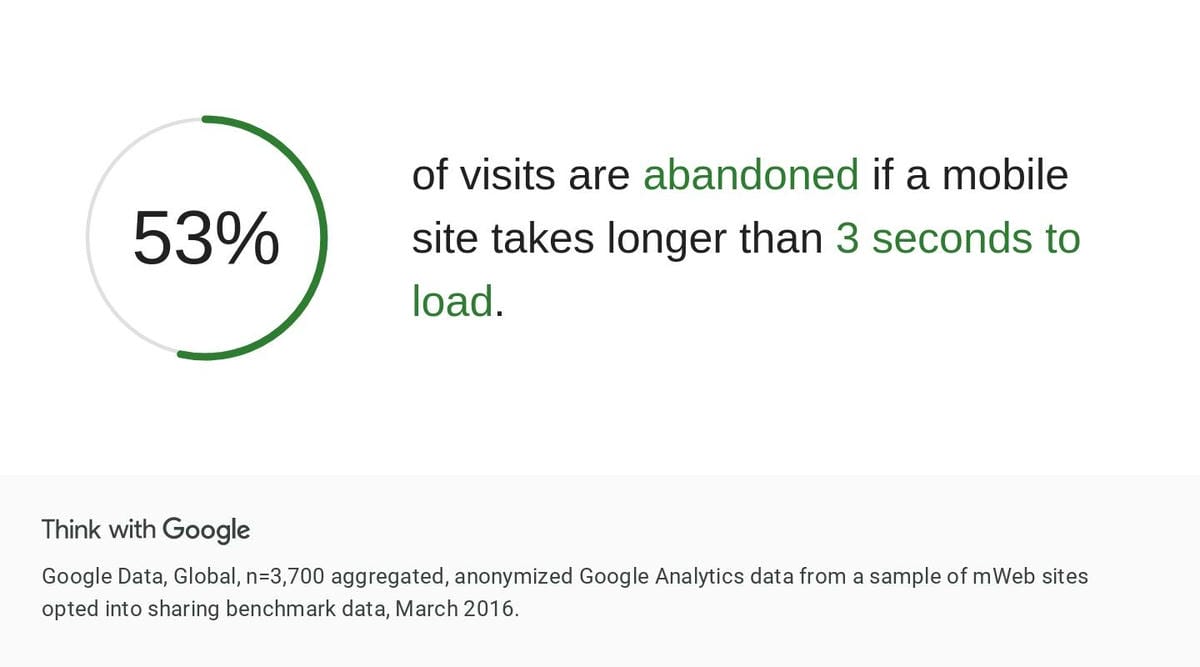

One of the worst experiences you can have as a consumer is to try and use a website and find that it is slow to load, especially on mobile. It is frustrating, annoying, and it can lead to users abandoning their carts and purchasing elsewhere.

According to Shopify, a one second improvement in site speed can boost mobile user conversions by up to 27%, and consumer insights from Google show that 53% of mobile visits are abandoned if the site takes longer than 3 seconds to load. However, user experience and conversions aren’t the only benefit.

Google’s ranking systems take into consideration a variety of signals that align with overall page experience. So while speed isn’t the most important signal, it certainly can help with rankings.

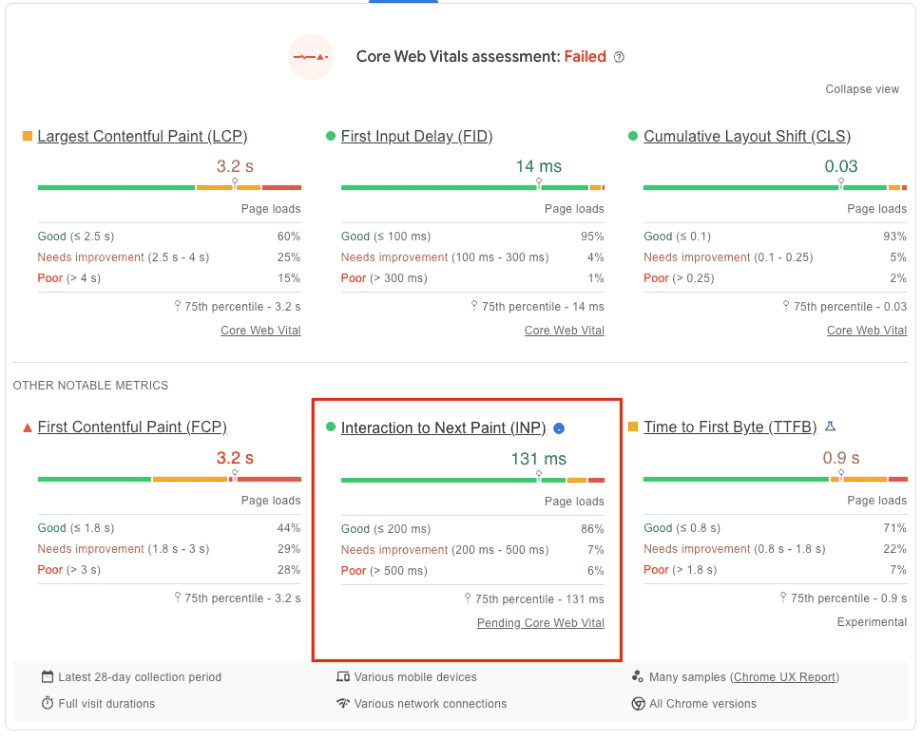

The latest update to Core Web Vitals saw FID replaced with Interaction to Next Paint (INP) in March 2024. INP is a better way to capture elements of interactivity on the web than FID. In a nutshell, it is a metric that assesses a page’s overall responsiveness to user interactions by observing the latency of all click, tap and keyboard interactions.
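To give a feel for how the metric behaves, here is a simplified sketch. It approximates a page visit’s INP as the slowest interaction, skipping one outlier for every 50 interactions, and rates it against the published thresholds of 200 ms (good) and 500 ms (poor). The function names and latency values are made up for illustration, and real measurement comes from browser and field data rather than a hand-built list.

```python
def estimate_inp(latencies_ms):
    """Approximate INP for one page visit from its interaction latencies."""
    if not latencies_ms:
        raise ValueError("need at least one interaction")
    ranked = sorted(latencies_ms, reverse=True)
    # Skip one of the slowest interactions per 50 recorded.
    skip = min(len(ranked) // 50, len(ranked) - 1)
    return ranked[skip]


def classify_inp(inp_ms):
    """Rate an INP value against the Core Web Vitals thresholds."""
    if inp_ms <= 200:
        return "good"
    if inp_ms <= 500:
        return "needs improvement"
    return "poor"


short_visit = [80, 120, 640]          # hypothetical latencies in ms
long_visit = [100] * 59 + [900]       # one outlier among 60 interactions

for visit in (short_visit, long_visit):
    inp = estimate_inp(visit)
    print(inp, classify_inp(inp))
```

Note how a single slow interaction dominates a short visit, while on a long visit one outlier is discounted.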

Olga Zarr from SEOSLY has prepared a detailed guide on INP, how you can measure it and where to start optimizing your website to improve your score. You might also want to check out Sitebulb’s guide to testing Core Web Vitals.

An added benefit of improving site speed is that it can also increase the number of pages search engines are able to crawl in a specific amount of time, which is especially useful for larger ecommerce websites. If you run a large ecommerce website, or a medium website that changes rapidly, Google has published a detailed guide on how to better manage your crawl budget.

Mobile Optimization

Originally introduced in late 2016, mobile-first indexing is now the standard for Google, which has spent the last few years switching all websites to the mobile-first index. To perform well in search, it is fundamental that your website is mobile-friendly and designed with the mobile user in mind.

Google recommends that websites use a responsive web design as it is the easiest to implement and maintain. One of my tasks during the initial announcements of mobile-first indexing in 2016 was to check the mobile vs. desktop versions of all client websites.

I found a lot of discrepancies between the two, where desktop had been prioritized and things like breadcrumbs, structured data and even page content weren’t available on the mobile-adapted version. A responsive design usually eliminates this, as all content and metadata are the same across all versions of the page.

Google has provided full documentation on mobile sites and mobile-first indexing if you need to review your website and improve your chances of success.

Chapter 3: Website Crawling and Auditing Tools

Let data inform your decisions, especially when it comes to website changes that can easily impact both user experience and search rankings. Below are some of the best resources I’d recommend for undertaking audits and research.

There are several crawling and auditing tools that you can use to audit your website, and I tend to use a range of crawlers in conjunction with each other. Sitebulb is great for all website sizes, and I find the security Hints particularly useful. Screaming Frog SEO Spider is another tool I frequently use to complete site audits.

To audit site speed and Core Web Vitals, Google PageSpeed Insights is a tried and tested tool. Here, you’ll be able to view your speed score at origin level (average using real-world performance data from the last 28 days), as well as view at a more granular page-by-page level.
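If you want to pull the same data programmatically, PageSpeed Insights also has an API. The sketch below only builds the request URL and summarizes the page-level and origin-level field data from a response you have already fetched and parsed as JSON. The helper names are hypothetical, and you should check the current API documentation for quotas and API key requirements before relying on it.

```python
from urllib.parse import urlencode

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url, strategy="mobile"):
    """Build the PageSpeed Insights API request URL for one page."""
    query = urlencode({"url": page_url, "strategy": strategy})
    return f"{PSI_ENDPOINT}?{query}"


def summarize_field_data(response):
    """Collect (percentile, category) per metric at page and origin level."""
    summary = {}
    for level in ("loadingExperience", "originLoadingExperience"):
        metrics = response.get(level, {}).get("metrics", {})
        summary[level] = {
            name: (metric.get("percentile"), metric.get("category"))
            for name, metric in metrics.items()
        }
    return summary


request_url = psi_request_url("https://example.com/")
print(request_url)
```

Fetching `request_url` with any HTTP client and passing the decoded JSON to `summarize_field_data` gives a compact view you can track over time.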

Join us next time, when Sam Taylor returns to take us through her tips for ecommerce content optimization.


Sitebulb is a proud partner of Women in Tech SEO! This author is part of the WTS community. Discover all our Women in Tech SEO articles.

Sam has over 10 years of experience in SEO agency-side and has worked in all areas of SEO, from content to technical, specialising in e-commerce websites. She has previously spoken at industry events, including BrightonSEO’s Crawling and Indexation Summit.

Sam Taylor