Internal Disallowed URLs

This means that the URL in question is disallowed by a rule in robots.txt.

Why is this important?

Disallowed URLs are not crawlable by search engines, which means that content from disallowed pages is not indexable, and links found only on disallowed pages will also not be reachable by search engine crawlers.

Disallowing URLs is a common method for managing search engine crawlers, so they do not end up crawling areas of a website that you don't want them to crawl (e.g. a user login area). As such, there is potentially nothing wrong with URLs being disallowed; Sitebulb is simply notifying you that they are there.

What does the Hint check?

This Hint will trigger for any internal URL which matches a disallow rule in robots.txt.

Examples that trigger this Hint

Consider the URL: https://example.com/pages/page1

The Hint would trigger for this URL if the website's robots.txt file included a disallow rule that stopped search engines from crawling the URL.
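For instance, a rule such as `Disallow: /pages/` would match this URL. The sketch below is one way to check this yourself with Python's standard `urllib.robotparser` module. The robots.txt content and the `is_disallowed` helper are assumptions made up for illustration; they are not taken from Sitebulb or from any real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, assumed for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /pages/
"""


def is_disallowed(url: str, user_agent: str = "*") -> bool:
    """Return True if the assumed robots.txt blocks `user_agent` from `url`."""
    parser = RobotFileParser()
    # parse() accepts the robots.txt content as an iterable of lines.
    parser.parse(ROBOTS_TXT.splitlines())
    return not parser.can_fetch(user_agent, url)


print(is_disallowed("https://example.com/pages/page1"))  # True
print(is_disallowed("https://example.com/about"))        # False
```

Note that Python's parser may not interpret every rule exactly as a given search engine or crawler does, so treat the result as a rough check rather than a definitive answer.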

Why is this Hint marked 'Insight'?

This Hint is an 'Insight', which means there isn't necessarily any action that needs to be taken - the Hint is intended to draw your attention to something, rather than flag an issue that needs fixing. Disallowed URLs are common, and there is often nothing wrong in this situation.

However, it is worth double-checking that no URLs are being disallowed which should not be. If there are, the solution is to isolate the robots.txt rule(s) disallowing the URL and delete those lines from robots.txt.
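If you want to isolate the matching rule(s) by hand, a rough sketch along the following lines may help. It is deliberately simplified: it treats each `Disallow` value as a plain path prefix and ignores `User-agent` groups, `Allow` rules, and wildcard characters, so it can both miss and over-report rules. The function name and the sample robots.txt are hypothetical.

```python
from urllib.parse import urlsplit

# Hypothetical robots.txt content, assumed for illustration only.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /login/
Disallow: /pages/
"""


def matching_disallow_rules(robots_txt: str, url: str) -> list:
    """Return (line number, rule path) pairs for Disallow lines that prefix the URL path.

    Simplification: User-agent groups, Allow rules and wildcards are ignored.
    """
    path = urlsplit(url).path or "/"
    matches = []
    for number, line in enumerate(robots_txt.splitlines(), start=1):
        rule = line.split("#", 1)[0].strip()  # drop trailing comments
        field, _, value = rule.partition(":")
        value = value.strip()
        # An empty Disallow value blocks nothing, so it is skipped.
        if field.strip().lower() == "disallow" and value and path.startswith(value):
            matches.append((number, value))
    return matches


print(matching_disallow_rules(SAMPLE_ROBOTS_TXT, "https://example.com/pages/page1"))
# [(3, '/pages/')]
```

Before deleting a line found this way, check which other URLs the same rule covers, since removing it opens all of them to crawling.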

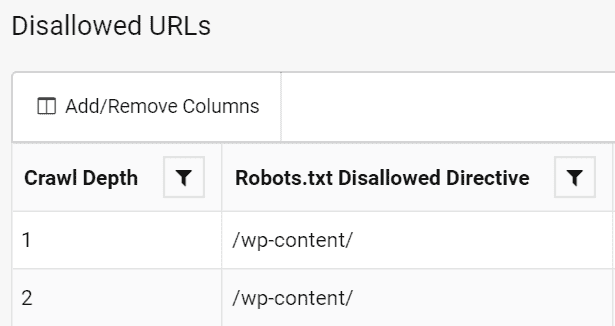

Clicking through from the Hint to the URL List will show you the robots.txt disallow rule that was triggered for the URL.