The Gemini Mistake That Made My Page Disappear & the Professional Analysis That Brought It Back

Published May 5, 2026

Today, Yitzhak Fayzak shares a real-world SEO case study: how a Gemini AI recommendation caused one of his pages to drop out of Google's results, and how his own manual analysis of search intent fixed it.

In this article, I want to share a real case from my own website. One recommendation from Gemini caused one of my most valuable pages to almost disappear from Google’s search results overnight.

This wasn’t a bug, and it wasn’t an algorithm update. On the surface, the recommendation actually made sense. But in practice, it damaged the one thing Google has been emphasizing more than anything in recent years: matching user search intent.

What happened here reveals a much bigger issue: not a technical flaw, but a real disconnect between how AI systems analyze content and how real users search, think, and respond.

And this isn’t some rare edge case. In 2026, with AI‑generated content flooding the web and automated advice becoming the default, this gap is turning into a genuine risk for SEOs.

In the next sections, I’ll show how three of the most advanced AI models completely missed the root cause and how a simple, manual SEO analysis uncovered what they couldn’t see.


Why SEOs rely too much on AI

Over the last few years, more and more SEO professionals have begun to rely on AI tools, often almost automatically, without pausing to examine whether the recommendations truly align with what the user is looking for.

My approach has always been different.

Throughout the years, I have made it a point to document every single change I made on a page: content edits, structural shifts, even minor phrasing tweaks. This documentation allowed me to identify real patterns and understand what makes a page rise, what makes it fall, and exactly how a small fix can bring it back on track. This manual tracking is what gave me true control over my rankings: not through dashboards or automations, but through a deep understanding of the process itself.
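A change log only pays off when each entry can be lined up against ranking data. The sketch below shows one possible way to do that. It assumes you have exported daily average positions for a single URL (for example, from Google Search Console) into a date-to-position mapping; `ChangeLogEntry` and `position_shift` are hypothetical names used for illustration, not part of any tool mentioned in this article.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from statistics import mean
from typing import Dict, Optional


@dataclass
class ChangeLogEntry:
    """One documented edit to a page (hypothetical structure)."""
    url: str
    changed_on: date
    description: str  # e.g. "Added year to H1 and title tag"


def position_shift(daily_positions: Dict[date, float],
                   entry: ChangeLogEntry,
                   window_days: int = 7) -> Optional[float]:
    """Hypothetical helper: mean position after a change minus mean before it.

    A positive result means the page dropped (a higher number is a worse rank).
    The day of the change is excluded from both windows. Returns None when
    either window has no data points.
    """
    start = entry.changed_on - timedelta(days=window_days)
    end = entry.changed_on + timedelta(days=window_days)
    before = [p for d, p in daily_positions.items() if start <= d < entry.changed_on]
    after = [p for d, p in daily_positions.items() if entry.changed_on < d <= end]
    if not before or not after:
        return None
    return mean(after) - mean(before)
```

Running `position_shift` over every logged entry makes it easy to see which specific edit preceded a large move, which is the kind of pattern this manual tracking is meant to surface. The seven-day window is an arbitrary default; shorter windows react faster but are noisier.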

This is exactly why the industry’s increasing reliance on AI is so concerning.

AI models are excellent at identifying patterns, but they don’t understand the full context of a page, its history, the reasoning behind previous changes, or how users actually respond to the content.

And what happened to me is not an isolated incident. Just this week, SEO expert Lily Ray shared a case where AI systems confidently presented a "Google algorithm update" that never actually took place. According to her analysis, this wasn't a one-off error but part of a process in which AI-generated misinformation is published, cited, fed back into the systems, and finally established as "fact." She calls this the "AI Slop Loop": a cycle where inaccurate information is repeated and reinforced until it becomes the dominant narrative across all AI platforms.

The implication is clear: we aren't just dealing with random glitches, but with a mechanism that can produce and spread misinformation on a massive scale. When such information begins to be perceived as reliable, even by professionals, relying on AI recommendations without human oversight becomes a genuine risk.

My case study illustrates this exact point: what happens when a recommendation that looks logical actually damages a page, and how only manual analysis could uncover the real problem and fix it.

The Gemini recommendation: A small change with a big impact

The page at the center of this case is my primary guide: What is SEO. For a long time, it was a steady performer, with rock‑solid evergreen content that consistently sat around position 28. It generated thousands of impressions and maintained stable visibility, but it remained stuck on the third page. My goal was clear: push it into the Top 10.

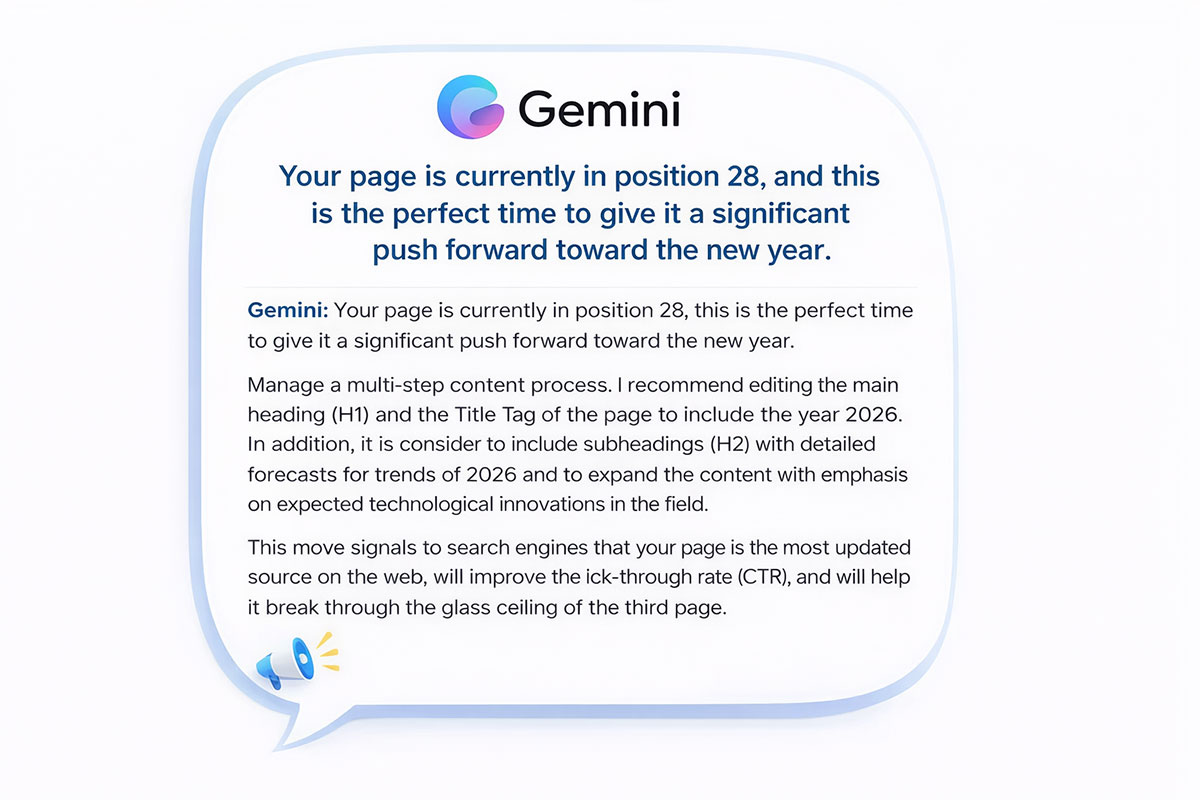

I turned to Gemini for direction. Its recommendation wasn't just a minor tip; it was a full action plan that sounded structured, logical, and aligned with common SEO principles. Looking at the recommendation itself, it's easy to see why it felt so convincing.

What Gemini actually recommended

Gemini analyzed the page’s position and concluded that this was the “perfect time to give it a push forward.” The plan focused on two main changes:

Adding the year: Updating the H1 and Title Tag to include “2026.”

Structural changes: Adding new H2 subheaders focused on 2026 trends and technological developments.

The logic behind the recommendation was simple and persuasive. According to Gemini, adding the year would signal to Google that the page is one of the most up‑to‑date sources. It also claimed this would improve CTR and help the page “break through the glass ceiling of the third page.”

It sounded right. And when you’re working with a tool built by Google, it’s easy to assume it has a deeper understanding of the algorithm.

At that moment, nothing in the recommendation suggested it could cause harm. On the surface, it looked like a smart move, exactly the kind of change that could help a stable page finally move upward.

But what happened next was the opposite of what I expected.

The crash: What happened after implementation

I implemented Gemini’s recommendations and updated the page. In less than 24 hours, it was clear that something had changed, but not in the direction I expected.

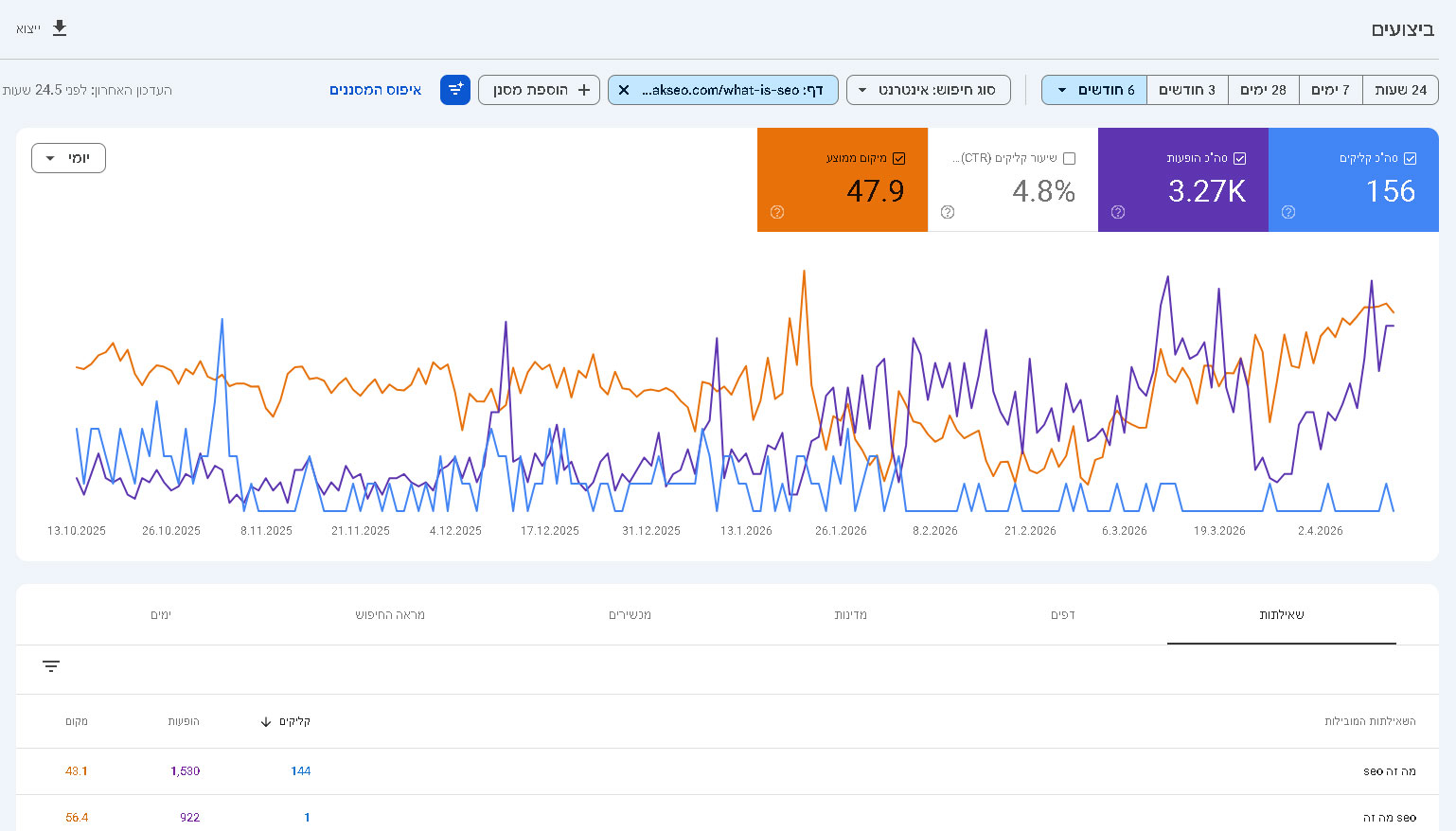

To understand what actually happened, I looked at two types of data: the general rankings over time, alongside the precise daily data points from Google Search Console (GSC). This is where the real picture began to emerge.

January 19th: Before the Change

While the page appeared stable around Position 28 in general tracking, the daily GSC data showed even stronger performance: an average position of 19.8.

January 21st: After Implementing the AI Recommendations

Within 48 hours of making the changes, the page crashed to Position 67.7, a drop of nearly 50 positions in a very short window.

February 1st: The Bottom

The decline continued until it hit Position 79.1, and the page nearly vanished from search results for its primary keywords.

But this wasn't just a drop in rankings; it was a reclassification. The page began ranking #1 for the query "What is SEO 2026", a new intent created by the changes. Meanwhile, it lost almost all visibility for its primary keyword, "What is SEO."

In other words, the page didn't move forward; it was diverted into a different, far less relevant search intent.
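A reclassification like this shows up most clearly at the query level rather than in a page-wide average. As a rough sketch, assuming you have exported per-query average positions for the URL for one period before and one period after the change (for example, from the Search Console Performance report filtered to that page), you could flag the queries the page gained and lost. The `classify_query_shifts` function and its thresholds are illustrative assumptions, not a standard method.

```python
from typing import Dict, List


def classify_query_shifts(before: Dict[str, float],
                          after: Dict[str, float],
                          top_n: float = 10.0,
                          lost_beyond: float = 50.0) -> Dict[str, List[str]]:
    """Hypothetical helper: flag queries a URL gained or lost between two periods.

    Positions are average positions (1 = top). A query missing from a
    period is treated as not ranking at all in that period.
    """
    not_ranking = float("inf")
    gained, lost = [], []
    for query in sorted(set(before) | set(after)):
        old = before.get(query, not_ranking)
        new = after.get(query, not_ranking)
        # Newly strong: now on page one, but was not before.
        if new <= top_n < old:
            gained.append(query)
        # Collapsed: was reasonably visible, now pushed deep or gone.
        elif old <= lost_beyond < new:
            lost.append(query)
    return {"gained": gained, "lost": lost}


if __name__ == "__main__":
    # Illustrative inputs modeled on the figures above; the year query is
    # assumed to have had no ranking before the change.
    shifts = classify_query_shifts(
        before={"what is seo": 19.8},
        after={"what is seo": 67.7, "what is seo 2026": 1.0},
    )
    print(shifts)  # {'gained': ['what is seo 2026'], 'lost': ['what is seo']}
```

A page that gains a narrow variant while losing its head term at the same time is the pattern worth investigating as a possible intent shift, rather than as a generic ranking drop.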

Analysis: As seen in the graph, on January 21st, immediately after the content update, the page experienced a sharp drop in both impressions and clicks, falling nearly to zero. This clear 'dip' demonstrates the immediate negative impact of the AI-driven recommendation.

Google Search Console data for the primary query: The orange line shows the average position crashing to 72.8, reflecting the ranking drop observed during manual SERP tracking (position 79).

Why 3 AI models failed to find the solution

After the ranking drop, I decided to analyze the case systematically. I presented the same data (the Search Console data, the content changes, and the URL) to three leading AI models.

Despite their advanced capabilities and access to the same data, not a single one identified the true cause of the decline.

1. Gemini’s analysis

Since Gemini is connected to Google’s ecosystem, I expected it to recognize the connection to the changes I had implemented. However, it focused on E-E-A-T and technical authority. It recommended strengthening backlinks and adding Schema Markup, assuming the problem was related to how the page was understood. The problem wasn’t about understanding. It was about classification.

2. ChatGPT’s analysis

ChatGPT ignored the content changes and focused on structural aspects. It suggested changing the site hierarchy, moving the page closer to the root domain, and adding internal links. The assumption was that this was a structural issue, even though the site structure had remained unchanged.

3. Claude’s analysis

Claude assumed it was a competitive issue. It recommended expanding the content, adding more topics, and improving the user experience. In other words, adding more depth, at a time when the page was already overloaded with content that did not align with search intent.

Summary

All three models focused on the "how": how the page was built, how it was presented, and how much authority it had.

But they missed the "what": what the page was actually communicating to Google and its users.

The result was three technical recommendations that failed to address the root cause. The solution, in this case, was not technical; it required a fundamental understanding of search intent.

The professional analysis: AI logic vs search intent

The failure in this case wasn't technical but contextual.

Gemini followed a simple logic: adding a year and updating phrasing signal freshness and relevance. This approach can work well for content that is updated frequently or for trending topics, but it does not apply the same way to evergreen pages.

In this case, the changes didn't just update the page. They also changed how it was perceived. Instead of appearing as a general guide to SEO, the page began to look like content focused on a specific timeframe, such as “SEO 2026.”

This shift changed how Google classified the page.

Why Google rejected the change

Narrowing relevance: For a broad query like "What is SEO," Google prioritizes stable and timeless explanations. Adding a year narrowed the page's scope and shifted it toward a much more specific query.

Search intent conflict: Users searching for "What is SEO" expect a clear and basic definition. After the update, the page presented something different: content focused on trends and predictions. This gap between user expectation and actual content led to the drop in rankings.

The central insight: The problem wasn't understanding the content. It was classification. The page was no longer aligned with the search intent of its main query.

When to use a year and when not to

It is important to clarify that the issue was not the use of a year itself, but the context in which it was applied.

There are cases where adding a year is not only appropriate, but necessary. Examples include frequently updated content, product reviews, comparisons, trend-based topics, and fields with clear version cycles.

In these cases, adding a year can improve relevance, increase CTR, and better match search intent.

However, for broad and foundational queries like “What is SEO,” the intent is completely different.

The user is not looking for a version tied to a specific year, but for a clear, stable, and timeless explanation.

Adding a year in this context does not improve the page. It changes how both users and search engines interpret it.

In this case, that shift was enough to move the page into a different and less relevant search intent category.
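The rule of thumb in this section can be turned into a simple pre-publish check. This is a minimal sketch under an obvious simplification: it only looks for a four-digit year (1900 to 2099) in the proposed title and in the target query, and warns when the title adds a year that the query does not contain. The function names are hypothetical, and deciding whether a topic genuinely calls for a year (reviews, comparisons, trends) still takes human judgment.

```python
import re
from typing import Set

# Matches standalone four-digit years from 1900 to 2099.
YEAR_PATTERN = re.compile(r"\b(?:19|20)\d{2}\b")


def years_in(text: str) -> Set[str]:
    """Return the set of four-digit years found in text."""
    return set(YEAR_PATTERN.findall(text))


def adds_unrequested_year(title: str, target_query: str) -> bool:
    """Hypothetical check: True if the title has a year the target query lacks.

    A True result is a prompt to double-check search intent, not proof of harm.
    """
    return bool(years_in(title) - years_in(target_query))


if __name__ == "__main__":
    print(adds_unrequested_year("What Is SEO? The Complete Guide for 2026", "what is seo"))  # True
    print(adds_unrequested_year("What Is SEO? The Complete Guide", "what is seo"))  # False
    print(adds_unrequested_year("Best SEO Tools 2026", "best seo tools 2026"))  # False
```

Comparing against the target query, rather than banning years outright, mirrors the point above: the year is fine when the intent itself is time-bound, and a red flag when it is not.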

Conclusion: AI is a tool, not an expert

This case highlights a crucial point for SEO in 2026. Artificial intelligence can assist in the SEO process, but it cannot replace human judgment.

AI models are excellent at analyzing data and identifying patterns, but they lack the ability to understand context. They struggle to interpret search intent and the nuances of user behavior.

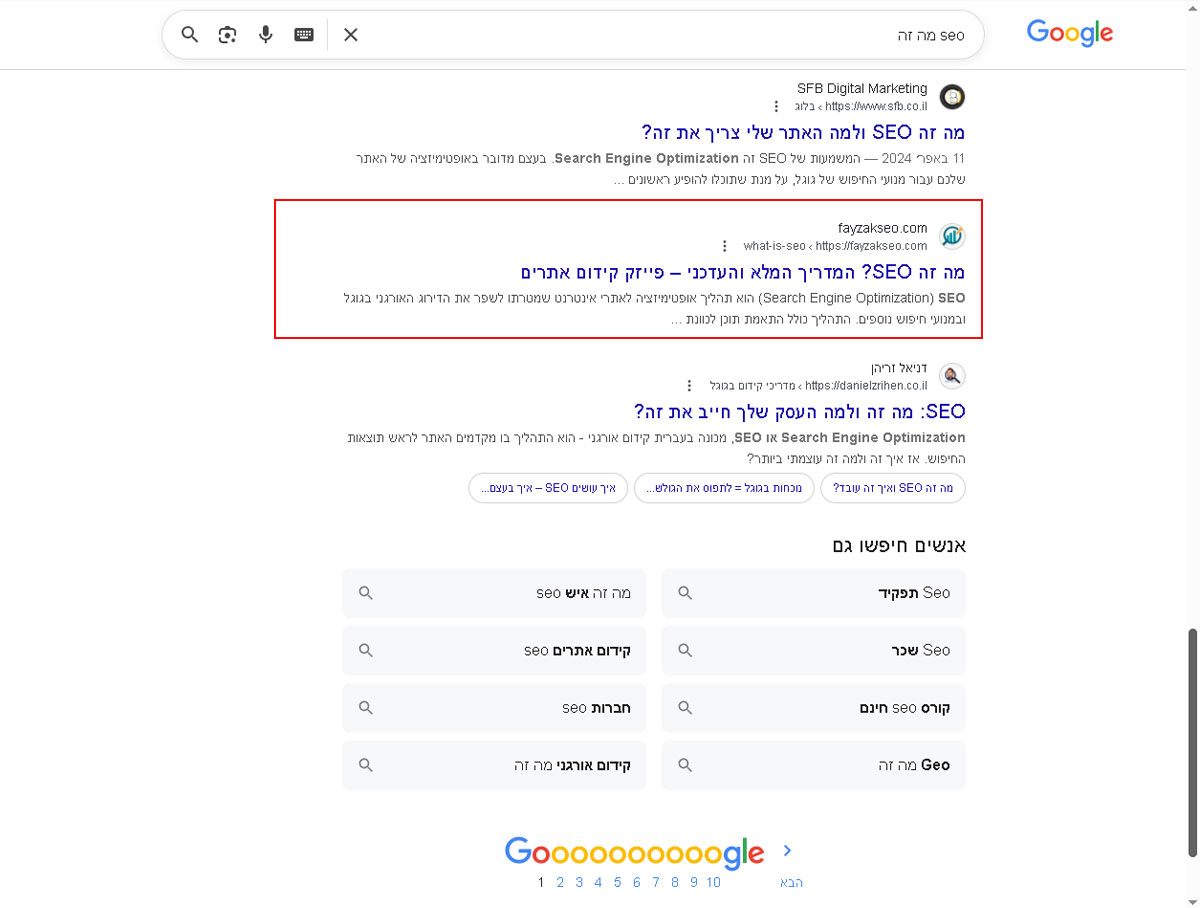

After I applied manual corrections, removing the seasonal signals and restoring the focus to stable, evergreen content, the results were clear.

The page didn’t just recover. It exceeded its original performance. It reappeared in search results, climbed to the second page, reached position 17, and continues to improve.

The takeaway for SEO professionals is simple. AI is a powerful tool, but it should not be making strategic decisions.

Human expertise, the understanding of user intent, and the ability to interpret context remain the most significant competitive advantages.

Google’s algorithm is designed to serve humans. Ultimately, it still takes a human to understand what they are truly looking for.

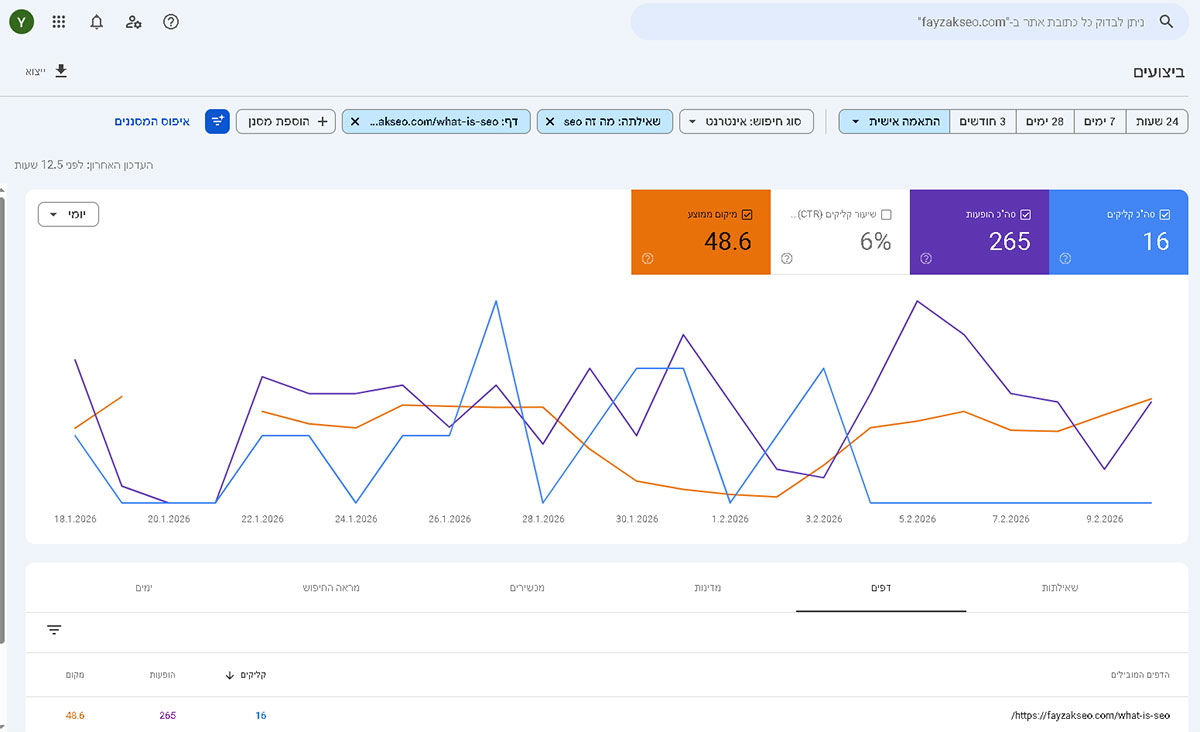

Residual impact: Why the AI signal still lingers

Even after restoring the page’s original intent, the effects of the AI-driven changes didn’t fully disappear.

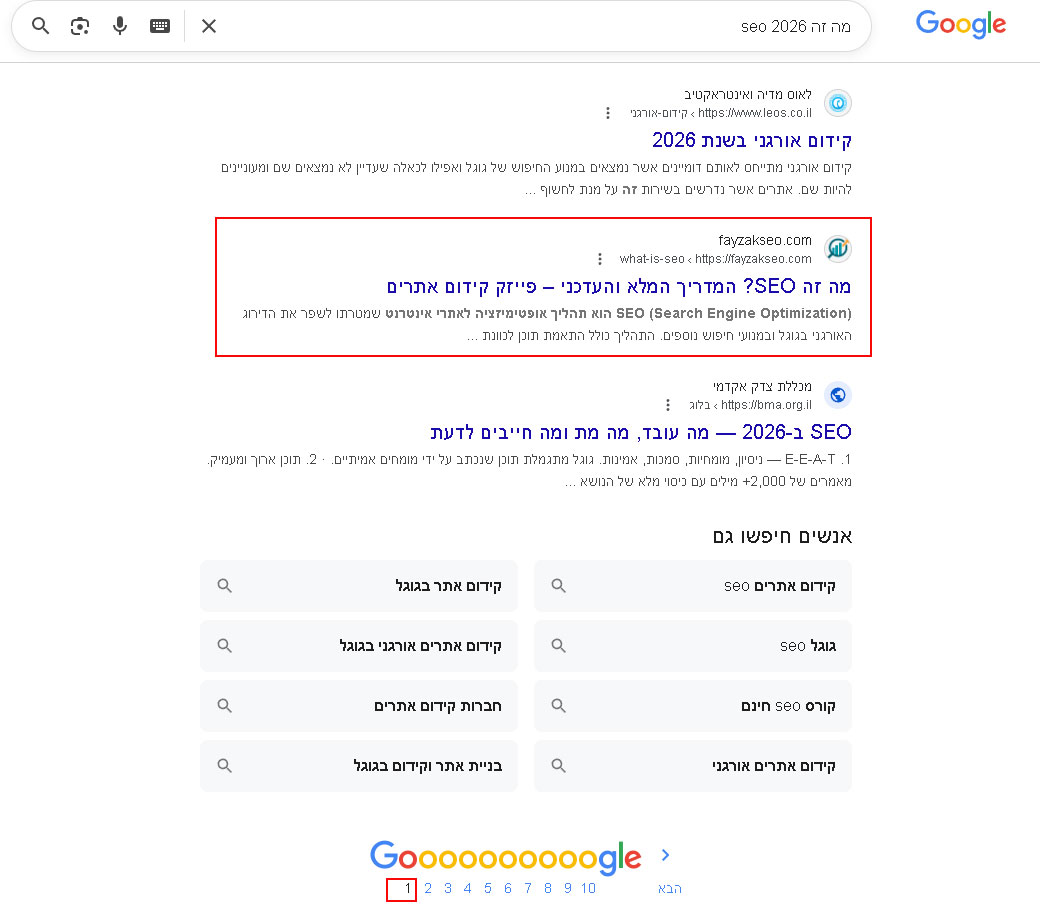

As shown in the latest anonymized check, Google still ranks the page as a top result for the query “What is SEO 2026.”

This is a clear example of intent misclassification.

While the page has recovered and climbed back to position 17 for its main, high-volume query, Google still partially associates the URL with the seasonal intent introduced by the update.

This highlights an important point for SEOs. It is relatively easy to shift how a page is classified, but much harder to fully reverse that signal and restore its original positioning.

Beyond recovery: Reaching the top 10

The final results exceeded my expectations. After restoring the original evergreen intent, the page didn't just recover; it moved into the Top 10, reaching position 7 for the main query "What is SEO," including variations such as "SEO מה זה" (Hebrew for "SEO, what is it").

This improvement highlights an important point. Once the page was realigned with the correct search intent, Google was able to reassess its relevance and reward it accordingly.

Yitzhak is a veteran SEO expert with over 18 years of experience in website building and 31 years in sales. He is the owner of Fayzak SEO, specializing in E-E-A-T and human-centric optimization. He is also a Jiu-Jitsu practitioner, bringing a competitive edge to the world of search.
