How Walker Sands used Sitebulb to fix JavaScript issues and reverse a huge drop in indexed pages

with Scott Zimmerman, SEO Lead Analyst


Walker Sands is an award-winning PR and marketing agency that emphasizes collaboration to bring PR and digital services together. We spoke with SEO Lead Analyst Scott Zimmerman about his approach to tackling a deep technical issue for one of the agency's clients.

When Walker Sands began working with a large B2B client in the predictive analytics/machine learning space, the brief was to increase the client's lead generation in specific, highly competitive verticals. However, there was a problem: the client had suffered a huge decline in organic traffic caused by a dramatic drop in indexing.

At its worst, the site was down to 9 URLs in Google's index, 7 of which were PDFs. Scott needed to understand what had caused the indexing issue and fix it as soon as possible, before they could even think about growth.

Scott's first port of call was Google Search Console, to check whether the site had received any messages and to gauge the extent of the indexing issue.

When Scott tested rendering in Search Console, the result was extremely alarming:

The issue was immediately apparent: if Googlebot cannot render the content, it cannot access it. That means it can't find the text content or metadata it needs for indexing, or the internal links to other pages on the site that it needs for crawling.

Scott turned to Sitebulb to understand why Googlebot was struggling to render the content. Sitebulb supports crawling and rendering JavaScript, and offers a range of reports that help analyse the results. In particular, Scott used the 'Live View' option to compare the rendered HTML against the source HTML.

“Sitebulb’s ability to crawl with headless Chrome made it easier to identify rendering issues, and the rendered vs response difference in Sitebulb was huge”
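To make the rendered-versus-source comparison concrete, here is a minimal Python sketch that pulls out the signals a crawler relies on (title, meta description, visible text and internal links) from two HTML snapshots of the same page and reports what only appears after rendering. It assumes you already have both snapshots, for example the raw HTTP response and the DOM serialised by a headless browser. The class and function names and the sample HTML are hypothetical, and this is not how Sitebulb implements its comparison.

```python
from html.parser import HTMLParser


class SeoSignalParser(HTMLParser):
    """Hypothetical helper: collects the title, meta description,
    link targets and visible word count from one HTML snapshot."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = ""
        self.links = set()
        self.words = 0
        self._in_title = False
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = attrs.get("content") or ""
        elif tag == "a" and attrs.get("href"):
            self.links.add(attrs["href"])

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False
        elif tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth:
            return  # script/style bodies are not indexable text
        if self._in_title:
            self.title += data.strip()
        else:
            self.words += len(data.split())


def extract_signals(html):
    parser = SeoSignalParser()
    parser.feed(html)
    parser.close()
    return parser


def compare(source_html, rendered_html):
    """Report what a crawler sees in each snapshot, and which links
    only exist once JavaScript has run."""
    src = extract_signals(source_html)
    ren = extract_signals(rendered_html)
    return {
        "title_source": src.title,
        "title_rendered": ren.title,
        "meta_source": src.meta_description,
        "meta_rendered": ren.meta_description,
        "words_source": src.words,
        "words_rendered": ren.words,
        "links_only_in_rendered": sorted(ren.links - src.links),
    }


# Illustrative snapshots (not the client's pages): an empty app shell
# as served, and the same page after client-side rendering.
SOURCE_HTML = (
    '<html><head><title></title></head><body>'
    '<div id="app"></div><script src="/bundle.js"></script></body></html>'
)
RENDERED_HTML = (
    '<html><head><title>Predictive Analytics</title>'
    '<meta name="description" content="ML services"></head><body>'
    '<div id="app"><h1>Predictive analytics</h1><p>We build models.</p>'
    '<a href="/data-science">Data science</a></div></body></html>'
)

if __name__ == "__main__":
    print(compare(SOURCE_HTML, RENDERED_HTML))
```

A large gap between the two snapshots in word count, metadata or links means the page depends on JavaScript for content a crawler needs; if rendering then fails, that content is effectively invisible to the search engine.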

Using a combination of Sitebulb reports, Google's Mobile Friendly Test, Page Speed Insights, and Lighthouse, Scott was able to:

With this deep understanding of the issues, Walker Sands rolled out a series of technical changes to address them, so that Googlebot could correctly render and interpret the DOM and extract the page content.

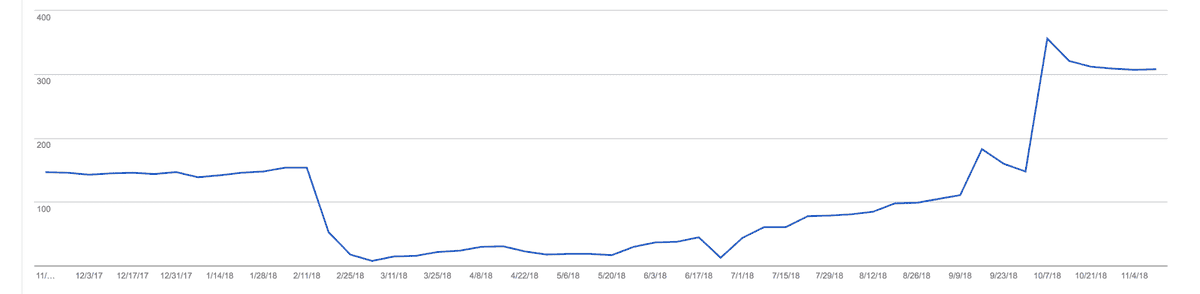

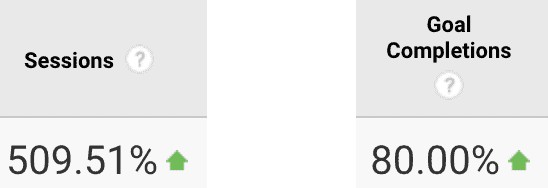

The implemented changes quickly reversed the indexation drop, as seen in the Index Status Over Time graph from Google Search Console:

More importantly from a business perspective, these changes increased traffic to core services pages by 509% (for ranking keywords such as 'predictive analytics', 'data science' and 'machine learning') and increased overall leads generated by 80%.

The results speak for themselves: by eliminating the fundamental technical problems that were preventing indexing, Walker Sands were able to get the website back on track and start increasing lead generation.

Scott had this feedback on how Sitebulb was invaluable in helping Walker Sands on this project:

"Oftentimes tackling very technical SEO issues can be a scary mountain to climb. In this case, a few more weeks and our client would have been left with only one or two pages left in the index. Sitebulb proved invaluable during the audit process. When the audit took place, there were very few tools that illustrated the difference between rendered content and the HTML document, and we needed to evaluate the difference at scale.

Without the analysis provided by Sitebulb, getting to the bottom of the problem would have taken much longer, and reversing the issue quickly was key to getting organic business results back on track".
