Sitebulb Release Rants

Transparent and sweary Release Notes for every Sitebulb update. Critically acclaimed by some people on Twitter. Written by CEO Patrick Hathaway.

Reader discretion is advised.

August 2025

Up to now, Sitebulb's 'crawl limit' has been calculated on all URLs crawled, which includes external links and page resource URLs in addition to internal HTML URLs.

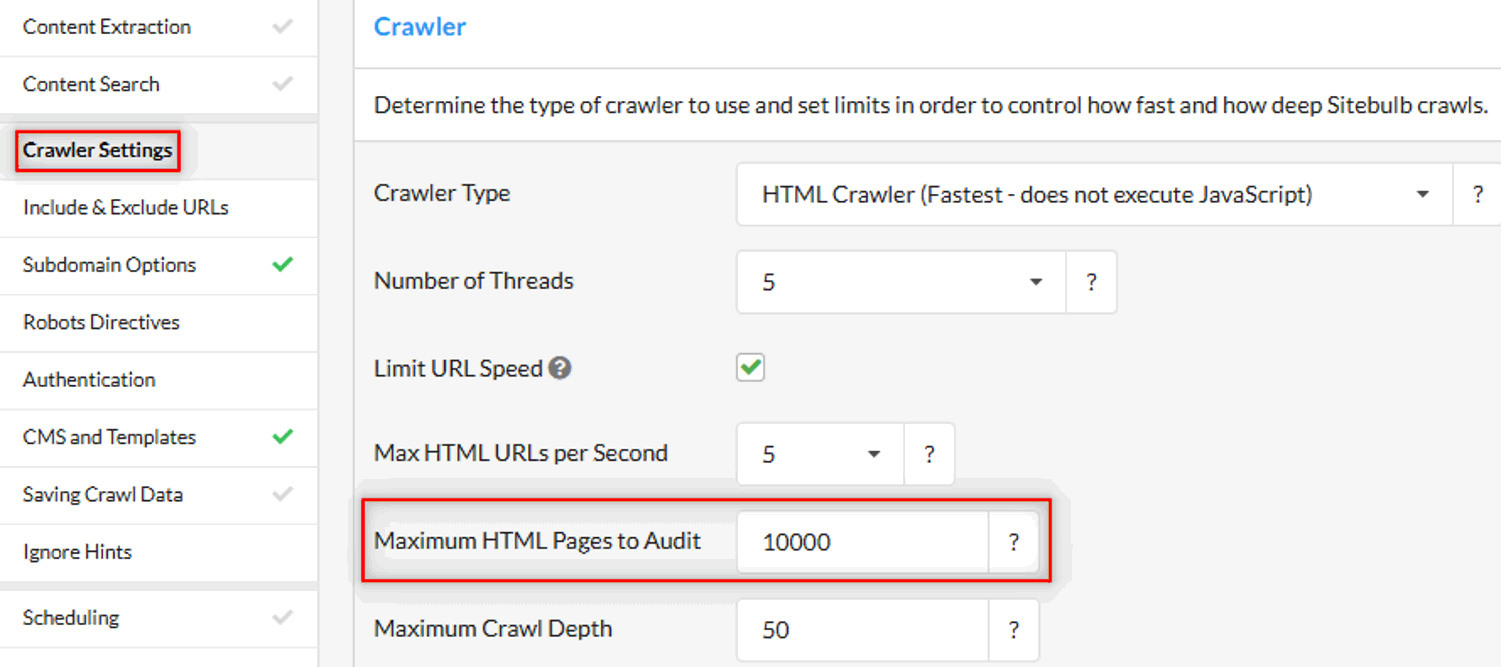

The limit I'm referring to is found in the 'Crawler Settings':

But most folks base their understanding of 'how big is my website?' only on internal HTML URLs (i.e. unique pages), so our implementation of these limits was confusing. As an example, you might have an ecommerce store with about 10,000 pages, made up of around 9,000 product pages and 1,000 other pages (categories, subcategories, blog, etc.). But if you run a crawl, Sitebulb could easily find another 1,000 external URLs, and maybe 50,000 page resource URLs (images, CSS, JavaScript, etc.).

So if you set a crawl limit of 10,000, you might actually find that the crawl would stop when only a couple of thousand internal HTML pages had been crawled (as the rest had been external links or page resources).

While no one actually complains at us about this sort of thing, we know how annoying it must be for day-to-day usage. So we completely changed our philosophy on how we calculate the limit: it's now based entirely on the number of HTML pages crawled.
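To put rough numbers on the difference, here is a back-of-the-envelope sketch in Python using the example store from above. It is not Sitebulb's actual code: the `SiteProfile` class and both function names are made up for illustration, and the 'old' calculation assumes URL types get crawled in proportion to how common they are, which a real crawl won't follow exactly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SiteProfile:
    """Hypothetical breakdown of the URLs a crawler could discover."""
    internal_html: int
    external: int
    resources: int

    @property
    def total(self) -> int:
        return self.internal_html + self.external + self.resources


def html_pages_under_old_limit(site: SiteProfile, limit: int) -> int:
    """Old approach: every crawled URL counts towards the limit.

    Assumption (illustration only): URL types are crawled in
    proportion to their share of all discoverable URLs.
    """
    if site.total <= limit:
        return site.internal_html
    return limit * site.internal_html // site.total


def html_pages_under_new_limit(site: SiteProfile, limit: int) -> int:
    """New approach: only internal HTML pages count towards the limit."""
    return min(limit, site.internal_html)


# The example ecommerce store: 10,000 pages, 1,000 external URLs,
# 50,000 page resource URLs.
store = SiteProfile(internal_html=10_000, external=1_000, resources=50_000)

print(html_pages_under_old_limit(store, 10_000))  # 1639
print(html_pages_under_new_limit(store, 10_000))  # 10000
```

Under those assumptions, a limit of 10,000 used to get you roughly 1,600 HTML pages on this store, which lines up with the 'couple of thousand' mentioned above. With the new calculation, the same limit covers all 10,000.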

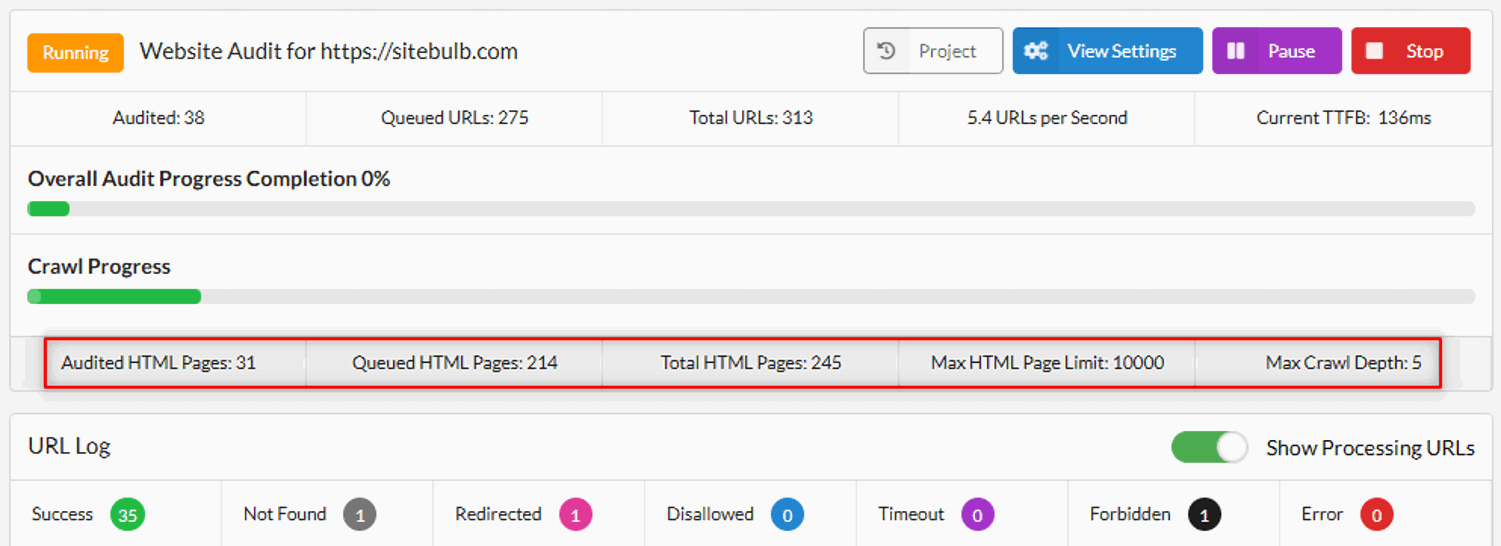

We also updated the Crawl Progress UI to add more clarity around how many HTML pages have been crawled or are due to be crawled:

In practice, this means all of Sitebulb's plans have become more generous in terms of 'how many pages you can crawl', and it should be easier to set appropriate crawl limits as there is less guesswork involved.